In Defense of AI Slop

How can we reckon with the usefulness of LLMs?

Reading the internet is an exercise in the olfactory department. Every funding post on LinkedIn, every overly confessional Substack essay, reeks faintly of digital manure. AI slop is stinking up the web.

The writing it offers is smoothbrained, uninteresting, limp-dicked drivel. I hate how it is changing so much of my online experience. And, in a sign of how incentives influence beliefs, I hate how it makes the market for my newsletter so much more competitive.

This frustration is why this next sentence pains me so much to type. I don’t think it’s all that bad. Ugh.

Please do stay with me here, because I think “AI slop” is, ironically, itself a sloppy term.

The writing AI produces, when used properly, is stupid useful. We have been calling many different things “slop” as if they were the same thing. They aren’t! And that conflation is hiding from us the truth of what is happening to knowledge work. I’m not arguing AI slop is good. The word is simply doing too much work. This reluctant belief is driven by two realities:

On the micro level, the writing that Claude generates for me is the most performant text in my life. It pulls data, answers research questions, writes code, and gives feedback on my drafts. It has allowed me to scale this business all while keeping my expenses hilariously low.

On the macro level, evidence is mounting that people will pay for AI slop. Even the supposedly sophisticated buyers in categories like business writing love the stuff. 25% of the Business Substack leaderboard is written by AI. Two of the top ten are fully synthetic. Their subscribers like the LLM’s output enough to slap down a credit card, and these publications are earning millions a year. They are, demonstrably, kicking my ass at making money with a media company.

How can this possibly be?

Substack is infected with AI

I wanted to be analytically rigorous with my hatred of AI slop. So rather than rely on intuition, I used math. I pulled the ten most recent posts from the top fifty publications across a sample of Substack Bestseller categories and ran every one through Pangram, an AI detection tool that gave me research access to their API for this essay. Pangram did not sponsor or influence this work. They have previously sponsored this newsletter, which is how I got the research access, and I’d guess they strongly disagree with my conclusion. Taylor Lorenz ran a similar analysis a few weeks ago that piqued my interest in the topic.

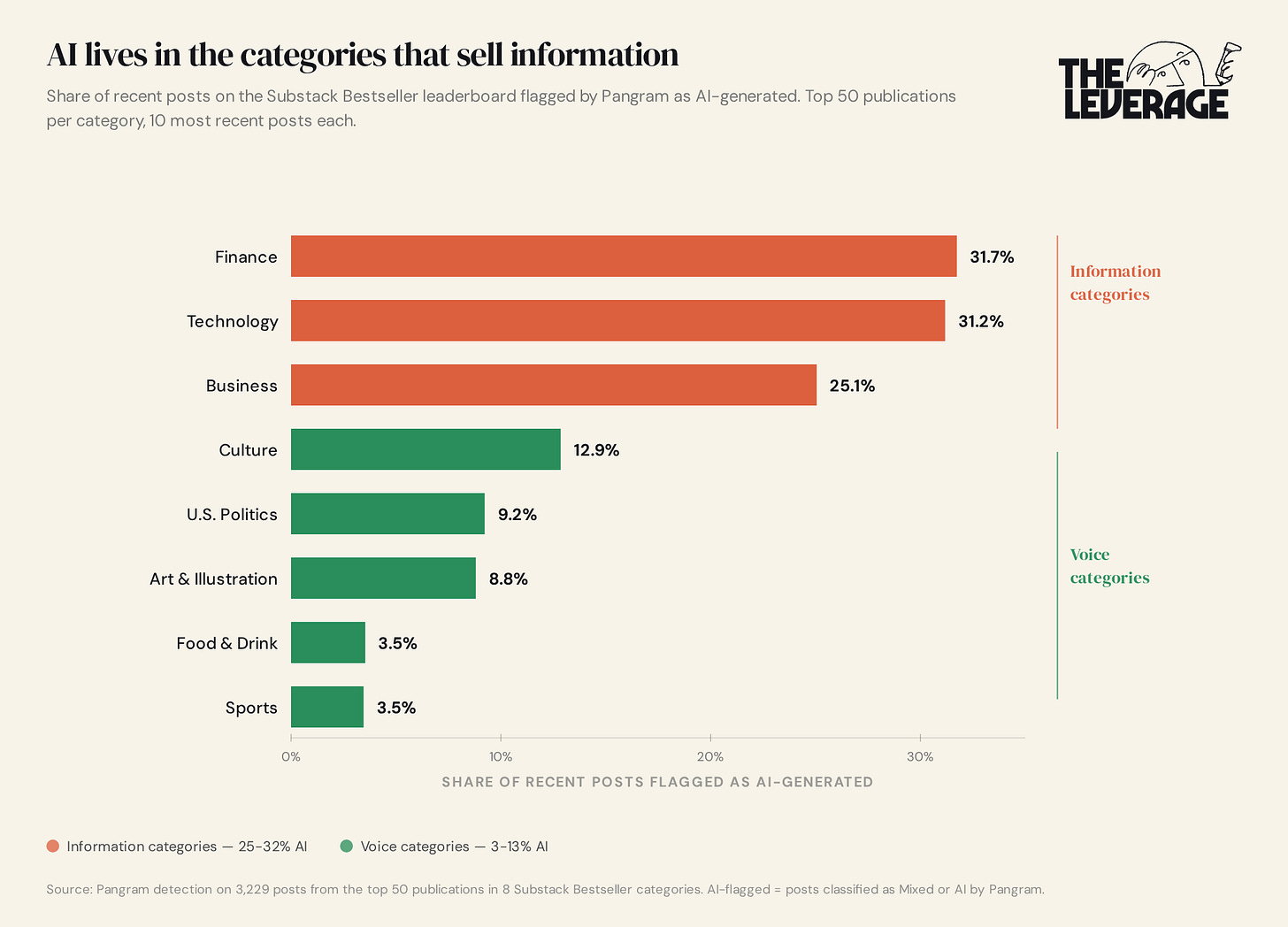

The first thing the data shows is that AI on Substack isn’t evenly distributed.

The categories split into two clusters. Tech, Finance, Business — primarily information categories — are 25-32% AI-flagged. Sports, Food, Politics, Art — voice categories — are 3-9%. I don’t think this is an early adopter thing or a temporary blip. AI writing eats categories that sell information. It does not eat categories that sell voice. (I’ll come back to why.)
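For anyone who wants to run the same kind of cut on their own data, here is a minimal sketch of the per-category arithmetic. It assumes each post has already been labeled as AI-flagged or not by a detector. The rows are toy values invented for illustration, not figures from my dataset, and `flag_rate_by_category` is a hypothetical helper name.

```python
from collections import defaultdict

# Toy rows invented for illustration: (category, ai_flagged).
# These are NOT the real Substack numbers.
posts = [
    ("Business", True), ("Business", False),
    ("Business", False), ("Business", True),
    ("Food", False), ("Food", False),
    ("Food", False), ("Food", True),
]


def flag_rate_by_category(rows):
    """Return {category: share of that category's posts flagged as AI}."""
    totals = defaultdict(int)
    flagged = defaultdict(int)
    for category, is_flagged in rows:
        totals[category] += 1
        flagged[category] += int(is_flagged)
    return {category: flagged[category] / totals[category] for category in totals}


rates = flag_rate_by_category(posts)
for category, rate in sorted(rates.items()):
    print(f"{category}: {rate:.0%} AI-flagged")
```

The only design choice worth noting is the denominator: the rate is flagged posts over all posts in the category, so a category with a few prolific AI publications can look heavily flagged even if most of its newsletters are clean.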

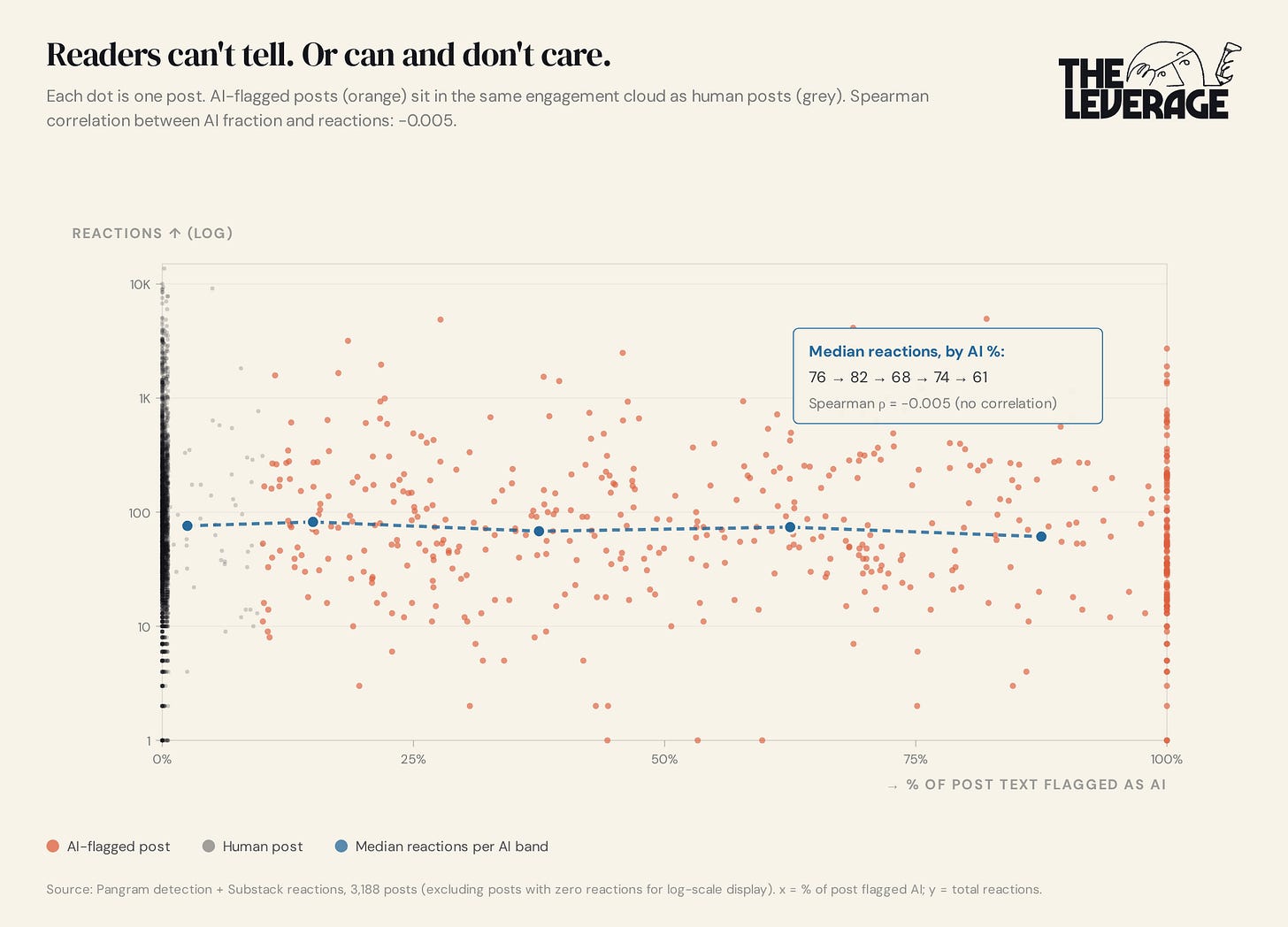

The second thing the data shows is that readers don’t seem to be punishing the AI-flagged posts.

Each dot on this chart is one post. Orange dots are AI-flagged while grey dots are human. The blue line tracks median reactions across AI bands — and it’s flat. The correlation between the percent of a post that’s AI and the number of reactions it gets is negative 0.005. Statistical zilch.

In non-nerd speak: A Substack post that’s 100% AI gets, on average, about as many reactions as a post that’s 0% AI. This should make us fundamentally reevaluate the “AI slop is bad” thesis. The readers of the most popular newsletters in the entire world can’t tell the slop from the rest. Or they can tell and they don’t care. Both are bad news for the people who thought the market would self-correct.
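If you want to check a claim like this yourself, the two numbers behind the chart are a Pearson correlation and a median per AI band. Below is a minimal sketch, assuming you have one AI percentage and one reaction count per post. The data is a toy sample invented for illustration, and the 25-point band width is my assumption, not necessarily what the chart uses.

```python
from statistics import mean, median


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


def median_by_band(ai_pct, reactions, band_width=25):
    """Group posts into AI-percentage bands and return the median reactions per band."""
    bands = {}
    for pct, count in zip(ai_pct, reactions):
        # Clamp so a 100% post lands in the top band instead of a band of its own.
        start = min(int(pct // band_width) * band_width, 100 - band_width)
        bands.setdefault(start, []).append(count)
    return {start: median(values) for start, values in sorted(bands.items())}


# Toy sample invented for illustration, NOT the real Substack data.
ai_pct = [0, 10, 30, 45, 60, 70, 90, 100]
reactions = [120, 80, 100, 100, 90, 110, 80, 120]

r = pearson(ai_pct, reactions)
bands = median_by_band(ai_pct, reactions)
print(f"correlation: {r:.3f}")
print(f"median reactions by band: {bands}")
```

A flat median line and a correlation near zero say the same thing in two ways: knowing how much of a post is AI tells you almost nothing about how many reactions it gets.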

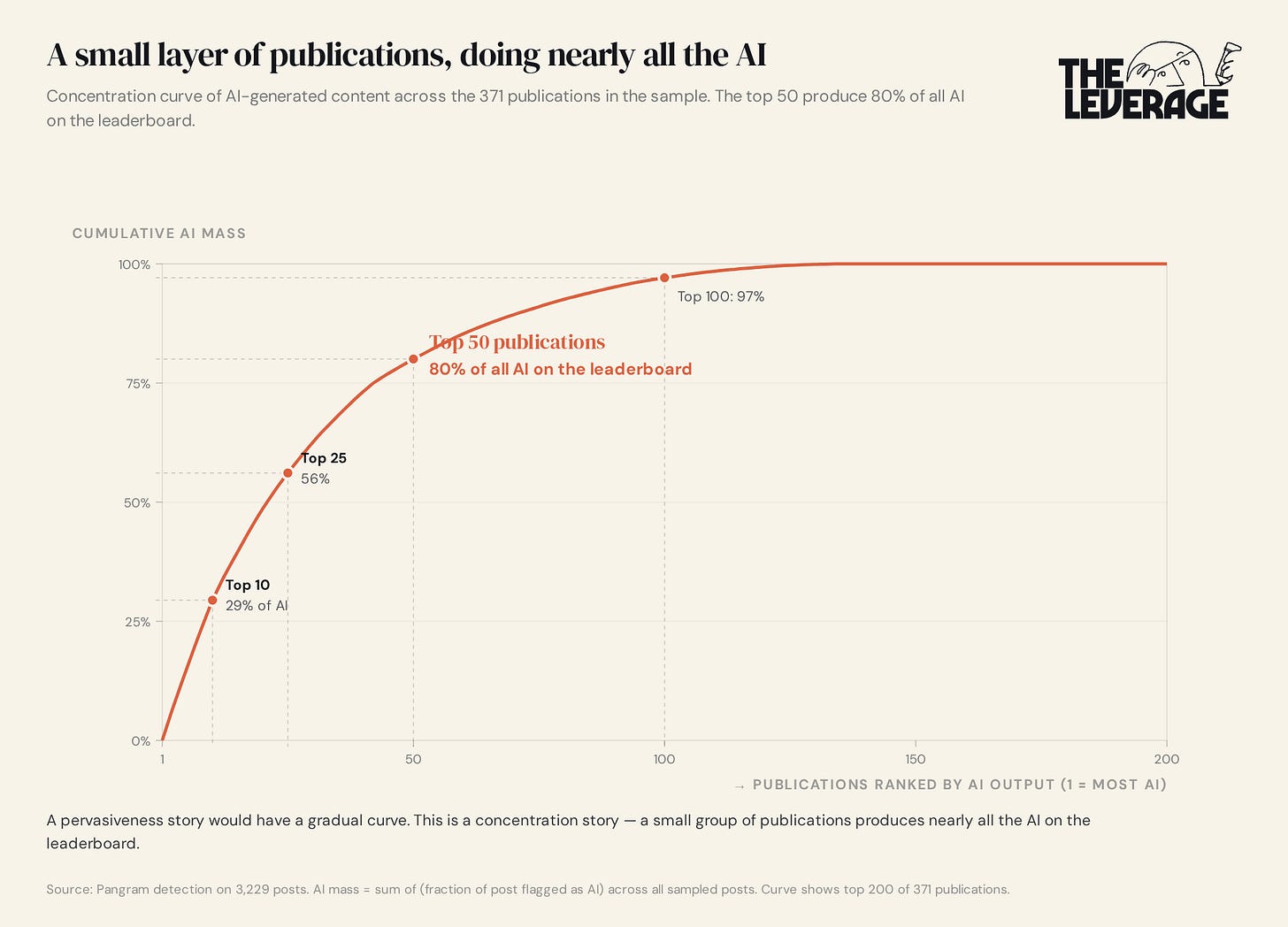

The third thing is that this is not a pervasive phenomenon, but is instead concentrated in a few publications.

Of the 371 publications in the sample, the top 50 produce 80% of all the AI on the leaderboard. Most newsletters are clean. A small, intense layer of publications (two dozen of them publishing nearly fully synthetic text on every post) is doing nearly all the AI publishing in the dataset, and they’re sitting comfortably on the leaderboard alongside the human ones. Two of those publications are in the top ten of their category. These are not small businesses.
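The concentration figure is a standard Pareto-style calculation: rank publications by how much AI-flagged output they produce, then ask what share the top N account for. Here is a minimal sketch, assuming you have a count of AI-flagged posts per publication. The publication names and counts are toy values invented for illustration, and `top_n_share` is a hypothetical helper name.

```python
def top_n_share(ai_posts_per_publication, n):
    """Share of all AI-flagged posts produced by the n most AI-heavy publications."""
    counts = sorted(ai_posts_per_publication.values(), reverse=True)
    total = sum(counts)
    if total == 0:
        return 0.0  # No AI-flagged posts at all, so there is nothing to concentrate.
    return sum(counts[:n]) / total


# Toy counts invented for illustration, NOT the real Substack data.
toy_counts = {
    "pub_a": 10,
    "pub_b": 6,
    "pub_c": 2,
    "pub_d": 1,
    "pub_e": 1,
    "pub_f": 0,
}

share = top_n_share(toy_counts, 2)
print(f"top 2 of {len(toy_counts)} publications: {share:.0%} of AI-flagged posts")
```

In this toy sample, two of six publications account for 16 of the 20 flagged posts. The real dataset has the same shape at a larger scale.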

It would be simpler if I could wave this off as the reading habits of the uncouth, uncultured, unshowered masses whose favorite book is The Da Vinci Code. Unfortunately, I have found myself increasingly loving AI slop.

I need to be honest

Before I go further, I owe you a confession.

The charts you just looked at? I didn’t make them. Claude did. I described the Pareto curve and correlation charts. Then, Claude wrote the SVG, fact-checked every number against the source CSV, and rendered the PNGs in The Leverage’s brand colors. I rewrote a chart title a few times because the framing felt slightly off and that is about it. Saved me three hours of menial data labor.

The Pangram results that anchor this entire essay? Yup, I didn’t pull those either. I told Claude Code what I needed, gave it access to Pangram’s API, reviewed/approved its plans, and told it to drop the results into a single CSV. While it worked, I ate a mango. By the time I came back, 3,229 posts were classified and the data was sitting on my desktop.

Even the structure of the piece you’re reading right now is the same story. Paywalls have always been the hardest part of this newsletter for me. I wrote a draft of this piece where I could tell the paywall didn’t accurately set up the dramatic stakes of this question. I described the problem to Claude, and we worked through seven different revisions together. The structural order of the section you are reading right now is a version Claude argued me into.

I am telling you all of this because I cannot, in good conscience, write a piece arguing about AI slop without admitting that this piece exists in the form you are reading because of AI tools. Is this essay AI slop? I don’t think so, but some of my more militant anti-AI readers would say that it is.

This is not the same as saying AI slop is the best writing I encounter. The classics are better. Kierkegaard is better yet. But for the jobs I actually use writing to do, LLM prose is the highest-performing tool I have. And “highest-performing” is what best means in a functional context, even when it isn’t what best means in an aesthetic or soulful one.

AI writing is the best in the world

If LLM prose is the best functional writing in my life, what does it mean that the same tool produces what we’ve all agreed to call slop?

I think the answer is that this isn’t really a piece about newsletters. This is about every business whose product is “we will tell you something you didn’t know.” The Leverage is in that category. So is every research firm, every paid Substack, every consulting deck, every analyst service, every market intelligence platform, every analytics SaaS tool whose pitch is “we surface the insight you’d otherwise miss.”

An incredible amount of the world is slop. The bestsellers that line the walls at airport bookstores? Slop. Consultants sending over 100-slide decks arguing for something everyone already knows? Slop. Adding one more SaaS tool that promises to 2x your productivity? Slop. The world has already clearly shown that it loves slop. Monetization method doesn’t matter: subscription or ads, the world buys slop. All AI does is make the raw components of that slop cheaper. The trick is that those raw components can also be used to make products better.

There is a single test that explains who wins and loses in this world. It explains why some categories are getting eaten and others aren’t, and why readers can’t tell the difference. And it explains why The Leverage might be in trouble if I don’t adapt fast.

It’s the most useful test I have learned this year. I am putting it on the other side of this paywall — along with what specifically I think operators should do in the next eighteen months, and how I’m going to figure out whether The Leverage is playing the right game.
