What’s the Bet: Pangram

Would you buy anti-slop technology?

Author’s note: This is the latest in my “What’s the Bet” series. AI has the potential to change everything, and founders have to make company-defining choices in response. That is not easy. In this series, companies pay me to analyze their bet and explain it to you. I retain editorial independence. Today’s bet is brought to you by Pangram, a startup that has built a Chrome extension that labels AI-generated content in your feeds in real time. If you have any feedback, just reply to this email.

Pangram’s bet: people will pay $20 a month to know what’s real on the internet.

That sentence is doing a lot of work. It assumes you care that more than half the content you scroll past was written by a machine. It assumes you’d rather know than not know. It assumes that “knowing” is worth more than some Subway sandwiches. None of that is obviously true to my eye. Millions of people happily watch Italian brainrot videos for hours or consume copious amounts of reality television. Pangram is likely not for those people. Pangram is for the readers, the writers, the researchers, and the operators who are starting to feel something is genuinely wrong with their internet and want a tool that does something about it.

The product they are launching this week is a Chrome extension that labels AI content in real time as you scroll X, LinkedIn, Substack, Reddit, and Medium. There’s a sidebar that tracks how much of your feed is slop. You can right-click on any text on the web and scan it. It runs passively. The nice part is that there is no copying and pasting; the labels just show up next to posts.

This is a multi-layered bet: on technology, on internet platforms, and on the willingness of people to pay for a vaccine against slop.

Why this bet, why now

Two things have shifted in the last 18 months that make this product viable in a way it wouldn’t have been in 2024.

First, the volume of AI content crossed a threshold where you can feel it without measuring it. Pangram’s collaboration with the Internet Archive found that by mid-2025, “roughly 35% of newly published websites were classified as AI-generated or AI-assisted.” By now, I’m willing to bet that number is over 50%. You can just feel it, right? The internet feels…off.

Second, the bad actors got infrastructure. The Russian troll farms that were operated by humans during the 2016 election cycle have been replaced with locally run open-source models pushing out comments and articles at scale. NewsGuard, in partnership with Pangram, has identified more than 3,000 “AI Content Farm” sites, fake local-news domains that exist solely to launder AI content into search results, and is finding 300 to 500 new ones every month. Those operators are never going to voluntarily label their content. The labeling has to happen on the consumer’s side.

As Max Spero, Pangram’s co-founder, put it on our call, “not every individual should have to train themselves to spot AI content. That’s what tools are for.”

The technical advantage

The thing that separates Pangram from the dozen other AI detectors you’ve seen advertised is a 1-in-10,000 false positive rate. The industry standard is between 1 and 5 percent, which means that somewhere between one and five of every 100 human-written things you check get flagged as AI. That sounds close to Pangram’s number if you didn’t happen to take Stats 101 in college. It is not. Even at the low end, it is 100 times worse; at 5 percent, it is 500 times worse.
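To make that gap concrete, here is a quick back-of-the-envelope sketch in Python. It assumes a hypothetical reader who checks 1,000 human-written posts a month; the rates are the ones quoted above, and the names in the code are made up for illustration.

```python
# Back-of-the-envelope comparison of false positive rates.
# Assumption: a hypothetical reader checks 1,000 human-written posts a month.
HUMAN_POSTS_CHECKED = 1_000

# False positive rates quoted in the article: the 1-5% industry range
# and Pangram's stated 1-in-10,000.
RATES = {
    "typical detector (1%)": 0.01,
    "typical detector (5%)": 0.05,
    "Pangram (1 in 10,000)": 1 / 10_000,
}


def expected_false_flags(fpr: float, n: int) -> float:
    """Expected number of human-written posts wrongly labeled as AI."""
    return fpr * n


pangram_fpr = RATES["Pangram (1 in 10,000)"]
for name, fpr in RATES.items():
    flags = expected_false_flags(fpr, HUMAN_POSTS_CHECKED)
    ratio = fpr / pangram_fpr  # how many times Pangram's rate this is
    print(f"{name}: ~{flags:g} false flags, {ratio:.0f}x Pangram's rate")
```

Under those assumptions, a 1 percent detector wrongly accuses about 10 human writers a month and a 5 percent detector about 50, while a 1-in-10,000 detector would accuse roughly one every ten months.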

How Pangram gets these results is, ironically, through AI. They trained what’s called a “classifier” model: a neural net trained on millions of paired documents (human-written and AI-written) whose sole job is to learn the token-level patterns that distinguish AI writing from human work. Importantly, the model is calibrated to treat a false positive as 10 times worse than a false negative. This is a deliberate choice to protect writers. The cost is that some AI content slips through, so Pangram won’t catch every single AI post on a heavy-slop account, but it catches enough to be directionally useful. The model also has a 50-word minimum, because shorter text doesn’t carry enough signal. The first three words of a sentence from a language model could have come from anyone. By word 50, the patterns are clear enough to call.
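Pangram hasn’t said exactly how it encodes that 10-to-1 asymmetry, so treat the following as one textbook way to do it rather than Pangram’s method: a cost-sensitive decision threshold applied to a classifier’s probability, plus the 50-word minimum. The classifier itself is left out, and `p_ai`, `label`, and `decision_threshold` are hypothetical names, not Pangram’s API.

```python
from typing import Optional

# Assumed costs: a false positive (human text labeled AI) is treated as
# 10 times worse than a false negative (AI text that slips through).
COST_FALSE_POSITIVE = 10.0
COST_FALSE_NEGATIVE = 1.0
MIN_WORDS = 50  # below this, there isn't enough signal to make a call


def decision_threshold(c_fp: float, c_fn: float) -> float:
    """Probability of 'AI' above which labeling text as AI has lower expected cost.

    Labeling AI costs (1 - p) * c_fp in expectation; labeling human costs p * c_fn.
    Label AI when p * c_fn > (1 - p) * c_fp, i.e. p > c_fp / (c_fp + c_fn).
    """
    return c_fp / (c_fp + c_fn)


def label(text: str, p_ai: float) -> Optional[str]:
    """Return 'AI', 'human', or None when the text is too short to call.

    p_ai is a hypothetical classifier's probability that the text is AI-written.
    """
    if len(text.split()) < MIN_WORDS:
        return None
    threshold = decision_threshold(COST_FALSE_POSITIVE, COST_FALSE_NEGATIVE)
    return "AI" if p_ai > threshold else "human"


long_text = " ".join(["word"] * 60)
print(label("Too short to call.", 0.99))  # None
print(label(long_text, 0.85))  # human
print(label(long_text, 0.95))  # AI
```

With a 10-to-1 cost ratio the threshold lands at 10/11, about 0.91, so a post the model thinks is 85 percent likely to be AI still gets the benefit of the doubt. That is the trade described above: fewer false accusations in exchange for more slop slipping through. It only behaves this way if the classifier’s probabilities are well calibrated.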

Third-party evaluations from the University of Chicago and the University of Maryland, along with others compiled in Pangram’s own roundup, have validated the accuracy claims.

What I tried, what I found

As someone who has been guzzling down AI-generated text since GPT-2, I thought I had good robot-dar. Pangram taught me that I was full of shit. I can sometimes tell when something is AI. But even the classic tells, like an abundance of em dashes or repeated phrasing tics, are not actually predictive. Shoot, in my own work, I love using some of these so-called tells. In that regard, Pangram has been incredibly helpful for checking my instincts.

The tool has also caused me to reckon with my own feelings about AI. Reflexively, I feel anger when my social media feeds surface AI content. But is that fair? If I can’t tell without Pangram telling me it’s AI, then maybe how it is made matters less than I thought.

Pangram has allowed me to realize that what actually matters is the thought put into the work, the underlying animus driving the writing. As I wrote on Tuesday, I know of multiple people who are building content companies on 100% AI-generated slop and making millions. Those people don’t even know what they are publishing, can’t stand behind their ideas, and will burn in content hell along with the songwriters of “What Does the Fox Say.” When I see someone publishing 100% AI-generated work consistently, I can automatically dismiss them.

As I’ve disclosed many times, I do sometimes use AI as part of my writing process. 99% of my use is on the research side, where it does a great job of pulling relevant data for my analysis. However, one specific thing I am terrible at as a writer is writing a paywall hook. I simply suck. There is something in my brain that pathetically whimpers and curls up in a corner each time I try to write that last paragraph or two before the paywall hits. So, almost always, I use AI to help me make it. Sometimes I can take whole sentences from its suggestions, though most of the time I end up having to rewrite the entire thing.

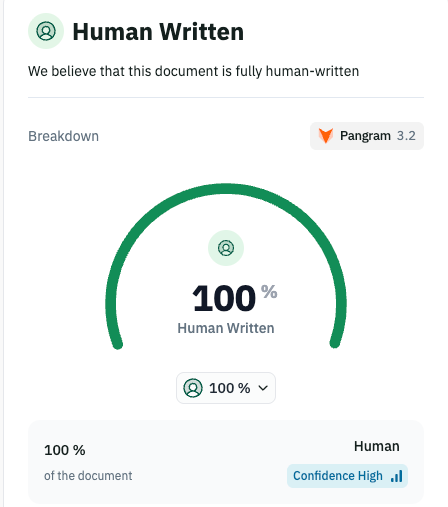

So Pangram will identify a decent chunk of my own work as “AI.” That is a label I now have to carefully reckon with, and I don’t see it as a value judgment on the product or on this publication.

Overall, the tool has been enormously useful for cleaning up my social media. Anytime I suspect someone of dipping too much of their prose in the AI sauce, I check what Pangram says, and then fire away with the block button. I’ll keep paying after my trial ends because I want to know where my content diet is coming from.

So how could Pangram’s bet succeed or fail?

What would prove the bet right or wrong

Right: Pangram clears 100,000 paying subscribers in the first 12 months, the brand becomes the default reference for “is this AI?” the way uBlock Origin became the default for ads, and the company expands into image and video detection. The platforms eventually adopt similar labeling under public pressure, and Pangram becomes the API layer underneath it.

Wrong: Consumers love the idea of a slop filter but won’t pay for it. Consumer is hard. The market converges on the platforms doing it for free at the 95-percent accuracy level, and Pangram’s accuracy advantage doesn’t matter to anyone outside of researchers and journalists. Or the AI models get good enough at mimicking human variance that Pangram can no longer keep its false positive rate that low, and the moat erodes. The company must then pivot its technology toward the place all startups eventually go: B2B services. Customers end up being platforms, universities, or newsrooms rather than individual consumers.

The most interesting failure mode is that AI-assisted writing becomes the norm for everyone and Pangram’s labels stop feeling like a warning and start feeling like a description of how text is produced now. If your favorite Substack writer is 10 percent AI-assisted, the label tells you something true that you might not want to know. Whether that makes Pangram more valuable or less valuable is unclear to me.

The simple promise

Most AI detectors ask you to do something. You gotta go to the site, paste it in, and wait. Pangram doesn’t ask you to do anything. It just sits in your browser and tells you what your feed is made of. If the bet pays off, in two years asking “is this AI” will feel as automatic as asking “is this an ad.” If it doesn’t, we’ll all keep eating the slop and pretending we can tell the difference.

Either way, the question of what’s real on your screen is going to keep getting harder. Pangram is the most accurate answer I’ve found.

If you want to try Pangram, Leverage readers get one month of the Chrome extension free with the code LEVERAGEPANGRAM.

And yes, in case you were wondering, here are the Pangram results from this post.
