Finally, Proof.

The Weekend Leverage, April 5th

Perhaps the thing that gives me the greatest amount of pause about my current beliefs about AI is meeting dudes who were crypto grifters in 2022 running AI companies now. I’ve been publicly writing about this stuff for years now, and my predictions have come true, but still, you can only hear so many guys in very, very tight black t-shirts agree with you before you start to have self-doubt.

This week quieted some of that. We got something unusual: proof. Harvard and INSEAD ran a controlled experiment where 515 startups had the same AI tools, but half of them were shown how other companies actually reorganized around AI. That group earned nearly 2x the revenue on 40% less capital. Google shipped a model that runs PhD-level intelligence on a gaming PC. And Coatue made the case that Anthropic will be worth $2 trillion in four years. The tight-t-shirt guys may have their life savings tied up in monkey JPEGs, but the data is starting to say they’ve got it right the second time.

Let’s get into it.

MY RESEARCH

Who’s actually paying when AI screws up? 64% of billion-dollar companies have already eaten AI-related losses averaging $4.4 million each, AI lawsuits are up 137% year over year, and every layer of the stack has lawyered its way out of responsibility. Someone is left holding the bag when AI makes mistakes. I investigated who. Read here.

How does your career survive AI? Searches for “prompt engineer” spiked 72x in three months and then collapsed to a flatline. Now everyone’s chasing “vibe coder.” Same hype cycle, same shelf life. I made a video about the career strategy that actually survives this churn. It involves electricians, a $335K Anthropic job posting, and a concept I’m calling the “plus-shaped person.” Watch here.

Everyone hates AI. Except the people building it. The backlash against AI is real and it’s getting political. I argued in my latest video that politics would play a much bigger role in business strategy this year because AI is such a hot-button issue. But most of the outrage is aimed at the wrong targets. Watch here.

WHAT MATTERED THIS WEEK?

BIG AI NATIVE

The AI-native firm genuinely does grow faster, and now we have proof. For the last few weeks, I’ve been investigating what it means to be an AI native in the context of a startup or a career. This week the good folks at INSEAD and Harvard Business School helped profile what AI-native operations look like, and more importantly, what the effect of going native is.

The experiment put 515 high-growth startups in a three-month accelerator. Half the firms got standard entrepreneurship training. The other half got case studies showing how AI-native companies have reorganized production around AI.

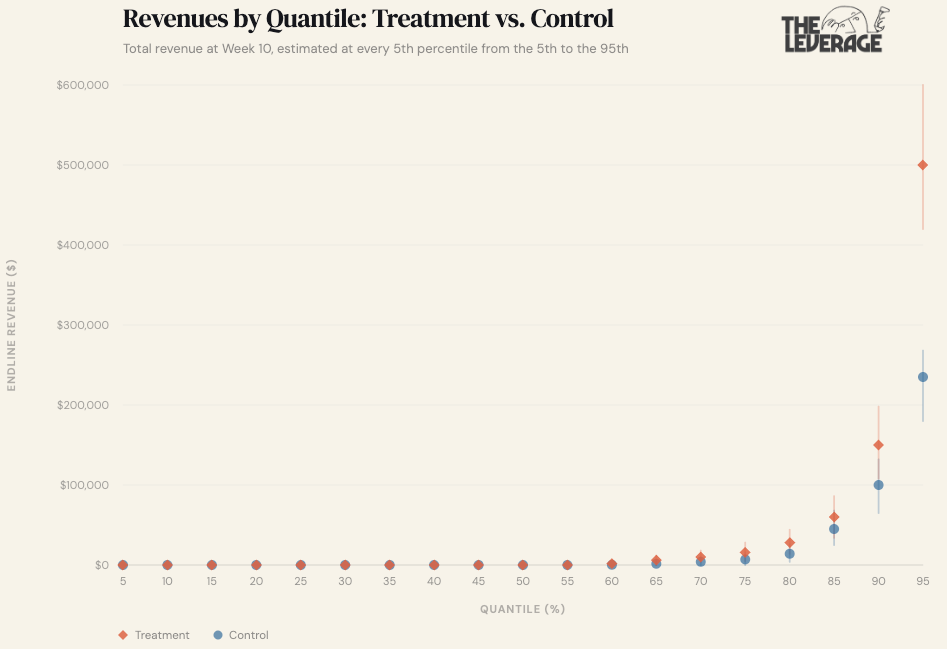

“Treated firms complete 12% more tasks, are 18% more likely to acquire paying customers, and generate 1.9x higher revenue. Revenue and investment gains are largest at the 90th percentile and above, consistent with AI expanding the upper range of what firms achieve rather than modestly improving marginal ventures. Despite faster growth, treated firms do not scale inputs proportionally. Their demand for external capital investment falls by 39.5% relative to the control group, while their demand for labor remains unchanged.” [Emphasis added].

This is about as definitive a proof as we will ever find that a company operating in an AI-native way is materially different than its peers. Nearly 2x the revenue with 40% lower capital requirements is what we professional analysts would call a whizpopper. A humdinger even.

Every firm in the experiment had the same technology. The bottleneck for firms attempting to go AI native is discovery: figuring out where in your production process AI creates value and how to reorganize the rest of the firm around it. Without seeing examples of how other firms reorganized, founders defaulted to obvious, low-value applications.

Transaction cost theory tells us that when coordination costs change, the optimal firm boundary changes. But this paper adds a layer in that firms can’t capture those gains until they discover the new boundary. The search for productive AI use cases itself is the friction. And it explains why most enterprises spending millions on AI licenses are seeing mediocre returns. They simply don’t know what they are doing.

One important note is that the revenue and investment gains were concentrated at the 90th percentile and above. AI isn’t lifting all boats equally. Get busy automating or get busy dying. If you are looking for a place to get started, you can check out some of the tools I use at The Leverage here.

BIG MOVES FOR LITTLE MODELS

Google’s new models show the future. The theory has long been that AI models would eventually get good enough to run on your own devices, for free, without sending anything to the cloud. This week we got the clearest picture yet of how that happens.

First: Gemma 4. Google released a family of AI models — free for anyone to download and run — and the flagship 31-billion-parameter version is ranking #3 among all open-source models globally, outpacing models 20 to 30 times its size on the Arena AI leaderboard. It scores 84% on PhD-level science questions and 89% on competition math — numbers that would have beaten OpenAI’s best reasoning model as recently as late 2024. Eighteen months ago, those scores required a datacenter. Now they run on a single gaming GPU!

Second: TurboQuant. This is the breakthrough most people scrolled past, and it’ll matter more than the model itself. When an AI model is thinking, it builds up a running memory of the conversation so far—called the key-value cache—and that’s what actually eats your device’s RAM during long conversations. TurboQuant compresses that working memory by 6x with no measurable loss in quality. So basically, you need way less memory, which means smaller computers can soon make AI go vroom. The market took that implication seriously. Within 24 hours, memory chip stocks like SK Hynix dropped 6%, Samsung fell 5%, and Micron slid 3.4%.
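To make the memory math concrete, here is a rough sketch of how a key-value cache is usually sized and what a 6x compression does to it. The 6x figure comes from the text above. Every model dimension below (layer count, head count, head size, context length, 16-bit storage) is a hypothetical placeholder chosen for illustration, not Gemma 4’s actual configuration, and the function name `kv_cache_bytes` is my own.

```python
def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   seq_len: int, bytes_per_value: int) -> int:
    """Approximate KV cache size: one key and one value vector
    per layer, per KV head, per token in the context."""
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_value

# Hypothetical model shape, for illustration only.
baseline_bytes = kv_cache_bytes(
    n_layers=48,
    n_kv_heads=8,
    head_dim=128,
    seq_len=131_072,      # a 128K-token conversation
    bytes_per_value=2,    # 16-bit values
)

compression_ratio = 6     # the 6x figure quoted in the text

baseline_gib = baseline_bytes / 2**30
compressed_gib = baseline_gib / compression_ratio

print(f"Uncompressed KV cache: {baseline_gib:.1f} GiB")
print(f"After 6x compression:  {compressed_gib:.1f} GiB")
```

Under these made-up dimensions the cache shrinks from 24 GiB to 4 GiB, which is the difference between needing workstation-class memory and fitting on a consumer card. Real numbers depend entirely on the actual model architecture.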

Gemma 4’s 26B model, compressed with these techniques, runs on hardware you can buy at Best Buy. A model that matches where the frontier was barely a year ago. Running locally. No subscription. No one reading your prompts. And this is the worst it will ever be: the 26B model only activates 3.8 billion parameters at a time, which is already in the range Apple’s M-series chips handle today. Within a generation or two of hardware improvements and continued compression gains, this caliber of intelligence runs on an iPhone. If you extrapolate these rates of improvement, I think AI roughly as powerful as today’s models will be running for free on a gaming computer within two years.

This is a wildly different world! If the model runs locally, the value capture has to move to data, integrations, and workflow, not inference. The API providers don’t go away, but their pricing power erodes to whatever premium “always-on, zero-latency, private” is worth to consumers. Alternatively, they have to have use cases that justify not waiting for a year or two, which is exactly why B2B is becoming such a large part of Anthropic’s and OpenAI’s businesses.

We’ve been talking about this transition theoretically for years. This week, it stopped being hypothetical.

BIG MULTIPLE

Is Anthropic at $2 trillion in four years nuts? Friend of the publication Eric Newcomer scooped that Coatue is projecting Anthropic at a $2 trillion valuation by 2030.

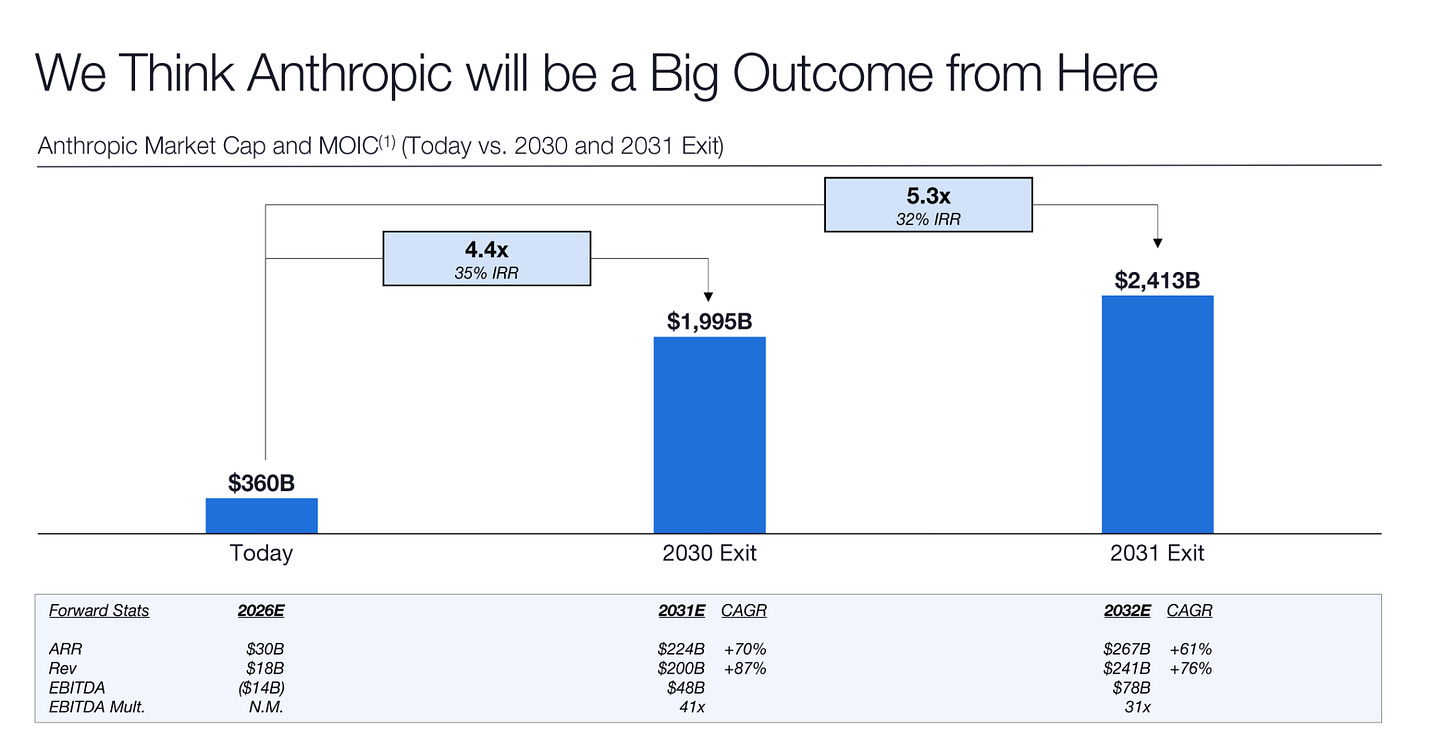

Here’s the key slide:

Coatue’s January 2026 presentation, leaked by Newcomer, lays out a path to a $1.995 trillion valuation for Anthropic by 2030 and $2.4 trillion by 2031. They co-led the $30 billion Series G at a $380 billion valuation in February, so they’re not speculating from the cheap seats. They have their well-moisturized, French skin in this.

So, let’s do the napkin math on what has to be true for that to not be insane.

Question 1: Is $200B in 2031 revenue plausible?

Anthropic is at $19B ARR as of March 2026. To reach $200B by 2031, they need to grow revenue roughly 10.5x over five years, which is a ~60% CAGR. For context, they grew from $1B to $19B in fifteen months. That’s a 19x in just over a year. So 10.5x in five years actually requires them to decelerate dramatically. The growth rate math is surprisingly conservative given the current trajectory.
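If you want to check the growth math yourself, here is a quick sketch. It uses only the figures quoted above ($19B ARR today, $200B in 2031, five years); the helper name `cagr` is my own.

```python
def cagr(start: float, end: float, years: float) -> float:
    """Compound annual growth rate needed to get from start to end."""
    return (end / start) ** (1 / years) - 1

current_arr_b = 19.0       # $B ARR as of March 2026 (from the text)
target_revenue_b = 200.0   # $B revenue in 2031 (from the text)
years = 5

growth_multiple = target_revenue_b / current_arr_b
required_cagr = cagr(current_arr_b, target_revenue_b, years)

print(f"Required growth multiple: {growth_multiple:.1f}x")  # 10.5x
print(f"Required CAGR: {required_cagr:.0%}")                # 60%
```

Swap in your own starting ARR or target year to see how sensitive the required growth rate is to those assumptions.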

But trajectories don’t compound forever. The question is whether the market is big enough. $200B in annual revenue would make Anthropic roughly the size of Alphabet’s current annual revenue. Is the market for AI models, API access, coding tools, and enterprise agents a Google-sized market? Probably, yes. The TAM case isn’t the hard part.

Question 2: Is a 41x EBITDA multiple defensible?

At $48B EBITDA on $200B revenue, that’s a 24% EBITDA margin. Not crazy for a high-margin software-adjacent business at scale. The 41x multiple, though, implies Anthropic is still growing fast in 2031 and the market still believes there’s another 5-10x ahead. For reference, Microsoft trades at roughly 25x forward EBITDA today, and Nvidia has traded above 40x during its AI run. So 41x is aggressive but not unprecedented for a company the market believes is still early-innings.
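The same napkin math works for the margin and the multiple. This sketch uses only the three numbers quoted above ($200B revenue, $48B EBITDA, 41x); the variable names are my own, and it treats valuation as simply EBITDA times the multiple, which is a simplification.

```python
revenue_b = 200.0        # $B revenue (from the text)
ebitda_b = 48.0          # $B EBITDA (from the text)
ebitda_multiple = 41.0   # multiple (from the text)

ebitda_margin = ebitda_b / revenue_b
implied_value_t = ebitda_b * ebitda_multiple / 1000  # convert $B to $T

print(f"EBITDA margin: {ebitda_margin:.0%}")              # 24%
print(f"Implied valuation: ${implied_value_t:.2f}T")      # $1.97T
```

That lands within rounding distance of the roughly $2 trillion headline, so the slide’s inputs are at least internally consistent.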

Question 3: What’s the bear case?

I most worry about the $48B in EBITDA. Most likely this fails because compute costs never come down fast enough, or because competition from open-source models (see: Gemma 4 above) compresses margins before they reach profitability. They’re currently burning $14B in EBITDA losses in 2026. The swing from -$14B to +$48B in five years requires both massive revenue growth AND massive margin expansion simultaneously.

The $2T number sounds insane on first contact, the way “trillion-dollar Apple” sounded insane in 2012. The revenue trajectory is actually the easy part given current momentum. The margin trajectory is where Coatue is making a genuinely bold call. They’re betting that AI model economics look more like software margins than hardware margins at scale. If that’s right, $2T is conservative. If that’s wrong, you’re holding a very expensive company that never quite figures out how to stop spending more on GPUs than it earns.

THE SLOPPENING

AI slop is in moral panic territory. On April 1st, more than 200 child advocacy organizations and experts, including the American Federation of Teachers and the American Counseling Association, with Jonathan Haidt among the signatories, sent a letter to YouTube CEO Neal Mohan and Google CEO Sundar Pichai demanding a full ban on AI-generated content from YouTube Kids. This is a direct follow-up to YouTube’s declaration of war on AI slop back in January, which I covered at the time. Three months later, the slop is winning.

The numbers are grim.

Fairplay found that top AI slop channels targeting children earned over $4.25 million in annual revenue producing what they describe as “plotless, mesmerizing AI content.”

A New York Times investigation in March found that after watching a single CoComelon video, over 40% of recommended Shorts in a 15-minute session were AI-generated.

The coalition’s demands are pretty comprehensive. They want to label all AI content, ban it entirely from YouTube Kids, prohibit AI-generated “made for kids” content on the main platform, and kill the algorithm’s ability to recommend AI content to anyone under 18. YouTube’s spokesperson responded with the corporate equivalent of “we’re working on it.”

Here’s my problem with the framing from the advocacy groups, and I say this as someone who has covered The Sloppening extensively and takes it seriously: asking YouTube to cut AI slop from its kids’ platform is like asking your drug dealer to stop spiking your black tar heroin with fentanyl and cayenne pepper. You’re still on heroin! YouTube is the attention drug. The algorithm’s entire business model is maximizing watch time. AI slop is better at maximizing watch time than human-produced content because it can be produced infinitely, tested infinitely, and optimized infinitely. But the product that delivers the hit is the culprit.

YouTube will make some concessions here, the same way it always does. There might be a new label or toggle or something. But the structural incentive is misaligned in a way that no Jonathan Haidt podcasting tour can fix. The real solution is parental intervention and platform alternatives, not asking the ad-revenue machine to please make less money.

TASTEMAKER

Dark is the height of artistry. Since becoming a Dad, I’ve pretty much stopped watching TV. Too little time, too many boogers to wipe, etc. Everyone needs a little bit of me time though—so to get it I’ve been waking up at 4:30 in the morning and riding the Peloton in the dark for an hour. Appropriately, I started to watch the Netflix show Dark as I rode. The show is incredible. When I talk about how you should fill your life with anti-slop, high-quality artistry—a bar that television rarely clears—Dark is what I mean. The show is built off a series of scientific paradoxes where you are questioning the nature of time and reality itself. The plot is intricate, the cast dynamite. You want color theory? They got color theory. You want a lighting director who went unbelievably hard? You got that too. You can just watch it, but man, the more attention you pay, the more the show rewards you.

As a fun bonus, the show has the single greatest website ever made to accompany a television show. It adjusts the explanations of the show based on what episode you are on. Plus, there are a million subtle design details that I had fun nerding out on. I recognize Netflix doesn’t really do quality programming like this anymore, but I wish they did.

(If you respond to this email with spoilers past season one, I will hunt you down and spit on your shoe. I have at least one more month of crack-ass-of-dawn wakeups before I get to finish this thing.)

Chris Fleming is unreasonably funny. I had never heard of the flamboyantly dressed comedian before. But my wife and I flipped on his special last night anyway. Within minutes, we were falling off the couch, choking on our sushi rolls, chortling at the man in the purple velour jumpsuit prancing around the stage. His style somehow combines incredibly wry observational humor with, and I promise I’m not making it up, interpretive dance. This sounds stupid. I know. But it really, really works. For a sample of his style watch this joke about how “there are snacks at Trader Joe’s that only women can see.” His full special is available on HBO.

Go and be kind this week,

Evan

Sponsorships

We are now accepting sponsors for Q2 ’26. If you are interested in reaching my audience of 34K+ founders, investors, and senior tech executives, send me an email at team@gettheleverage.com.