What a Week in AI

The Weekend Leverage, March 1st

In sci-fi novels about AI, the acceleration is unevenly distributed. Rapid accelerations contrasted against painful declines. That is where I am this week. I have never been more excited about the future of technology. My life has significantly improved in the last six months thanks to these tools—I am healthier, happier, richer. Simultaneously, the largest story in AI is about the United States government attempting to euthanize one of our best AI companies. And the largest story in the world is the ongoing war in Iran, also instigated by the United States government, potentially using the technology of the company they are attempting to murder to help plan their war. Terrible potential and wonderful promise all at once.

This week we’ll dive into how that manifests in layoffs, in ads, and in fundraising.

But first, a message from a new sponsor to the newsletter, Crusoe.

Every AI company pays an invisible tax. Not in dollars—in engineering hours spent wrestling with inference infrastructure instead of building product. You fine-tune a model, it works beautifully in testing, then you hit production and suddenly you’re debugging GPU clusters at 2am instead of shipping features.

Crusoe Managed Inference eliminates that tax.

Bring your fine-tuned model, and their team works with you to deploy it on a platform built for performance—5x more throughput than standard GPU clouds, breakthrough speed even as context windows grow (thanks to their MemoryAlloy technology), and the reliability production apps actually demand. You focus on building, they handle the infrastructure.

If you’re stuck in prototype purgatory, this is worth a look.

MY RESEARCH

Can ServiceNow become an AI winner? ServiceNow’s stock has been cut in half to ~24x forward earnings despite strong fundamentals, creating either a historic buying opportunity or a value trap. The bull case rests on ServiceNow’s unique position as an enterprise context layer. The bear case counters that foundation model providers like OpenAI are building competing context layers from inference rather than structured data, consumption pricing will erode revenue predictability, and shadow AI adoption may undermine the top-down governance model ServiceNow is betting on. My argument is that this company is a litmus test for the broader SaaS sector. Does AI make workflow platforms more valuable as orchestrators, or render them unnecessary entirely? Read here.

WHAT MATTERED THIS WEEK?

BIG TECH

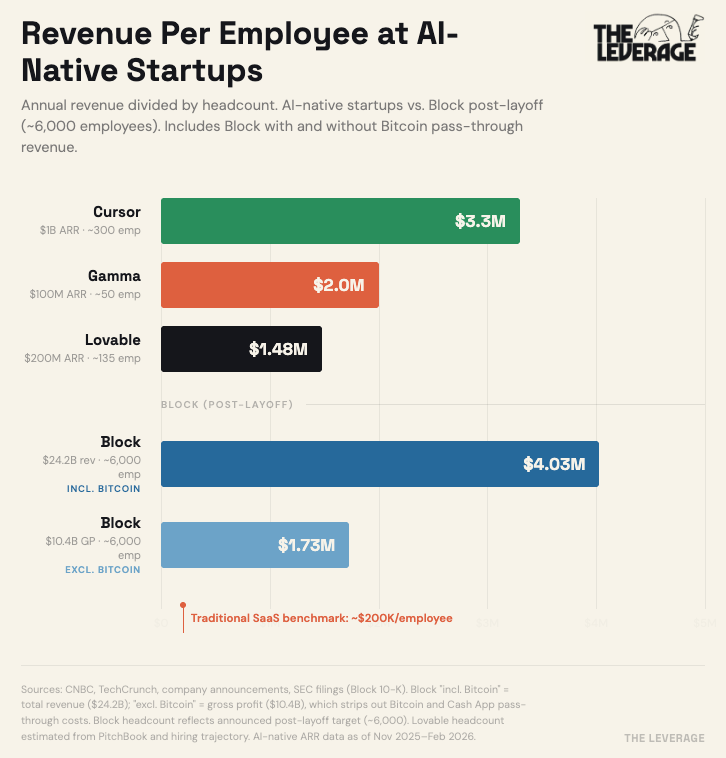

Block lays off 40% of its staff. This is one of the largest layoffs, as a percentage of employees, that I can remember from a tech company. The announcement by Jack Dorsey mostly framed the choice as needing to work in new ways with AI. On X, most of the criticism targeted his management and staffing policies. Both are likely true. Either way, this cut will put Block right in line with the revenue per employee that you see at leading AI startups today.

Block reported $24.2 billion in revenue last year, but nearly half of that is Bitcoin flowing through Cash App — when a user buys $1,000 in Bitcoin, Block books the full $1,000 as revenue even though it only pockets a small fee. Strip out those pass-through transactions and you’re left with $10.4 billion in gross profit, which is the number that actually reflects what Block earns.

That’s why the chart shows both: at face value, post-layoff Block looks like it matches the AI-native startups at $4 million in revenue per employee, but measured on the money it actually keeps, the figure is $1.73 million.
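The two per-employee figures can be sanity-checked with some quick arithmetic. This is a rough sketch, not Block's own math: the post-layoff headcount is not stated here, so the code backs out the headcount implied by each figure instead of assuming one. The function and variable names are made up for illustration.

```python
def implied_headcount(total_dollars: float, dollars_per_employee: float) -> float:
    """Headcount implied by a company-wide total and a per-employee figure."""
    return total_dollars / dollars_per_employee


REVENUE = 24.2e9       # reported revenue, from the text
GROSS_PROFIT = 10.4e9  # gross profit, from the text

# Per-employee figures quoted from the chart discussion above.
headcount_from_revenue = implied_headcount(REVENUE, 4.0e6)
headcount_from_gross_profit = implied_headcount(GROSS_PROFIT, 1.73e6)

# Share of reported revenue that survives as gross profit.
gross_profit_share = GROSS_PROFIT / REVENUE

print(f"Implied headcount (revenue basis):      {headcount_from_revenue:,.0f}")
print(f"Implied headcount (gross profit basis): {headcount_from_gross_profit:,.0f}")
print(f"Gross profit as a share of revenue:     {gross_profit_share:.0%}")
```

Both calculations land near 6,000 employees, so the $4 million and $1.73 million figures are consistent with the same post-layoff headcount. The gap between them comes entirely from which numerator you pick.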

I am in agreement with Dorsey: you do need dramatically fewer people to run a company now. AI agents have crossed the Rubicon, and we need new ways of staffing and team design to match this reality. I’ll talk more about that in an essay in the next few days. But this cut is the first of many to come from tech companies.

BIG LABS

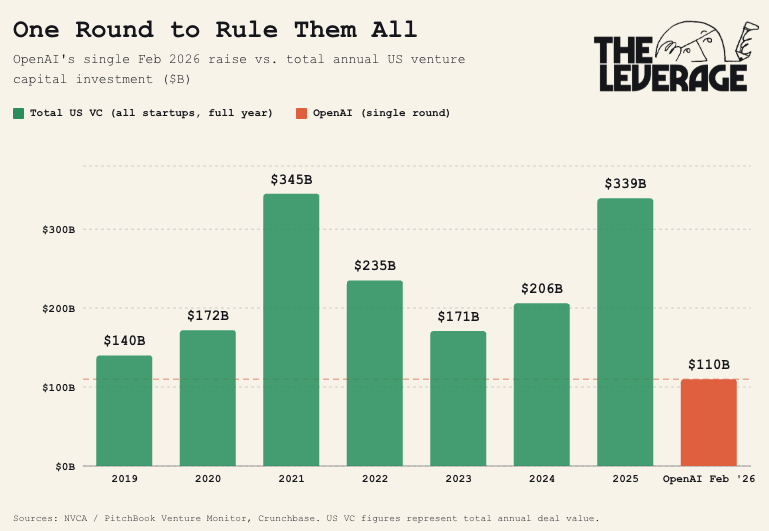

OpenAI speaks softly and carries a big check. The non-profit for-profit announced a $110 billion round this week, backed by essentially everyone you’ve ever heard of. Amazon and Nvidia were notable partners, kicking in a cool $50B and $30B respectively, with SoftBank rounding it out at $30B. To put that in perspective, this single round is roughly a third of all US venture capital deployed across every startup in America in 2025 ($339B), and it eclipses total annual VC investment in every pre-pandemic year on record. Add in the $40B raise from March 2025, and OpenAI alone has absorbed ~$150B in 12 months, more than every VC in America invested in the entire year of 2024.

Checks of this size always come with asterisks. Much of the Nvidia and Amazon capital is likely self-dealing revenue that goes right into chips for the AI to train on. Amazon’s clauses in particular are fascinating; only $15B of its $50B hits immediately. The remaining $35B is contingent on OpenAI either achieving artificial general intelligence or completing a US IPO. Apparently, building God or going public are considered equally difficult events in today’s capital environment.
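For anyone who wants to check the round math, here is a small sketch using only the dollar figures quoted above (all in billions). The variable names are illustrative, and the split of Amazon's check into an "immediate" and a "contingent" tranche simply restates the description above rather than any term sheet.

```python
# All figures in billions of dollars, taken from the text above.
CHECKS = {"Amazon": 50, "Nvidia": 30, "SoftBank": 30}
US_VC_2025 = 339          # total US venture capital deployed in 2025
PRIOR_RAISE = 40          # the March 2025 raise

round_total = sum(CHECKS.values())
share_of_us_vc = round_total / US_VC_2025
twelve_month_total = round_total + PRIOR_RAISE

# Amazon's check, split as described: part now, part contingent on AGI or an IPO.
amazon_immediate = 15
amazon_contingent = CHECKS["Amazon"] - amazon_immediate
contingent_share_of_round = amazon_contingent / round_total

print(f"Round total: ${round_total}B")
print(f"Share of 2025 US VC: {share_of_us_vc:.0%}")
print(f"Raised over 12 months: ${twelve_month_total}B")
print(f"Amazon contingent tranche: ${amazon_contingent}B "
      f"({contingent_share_of_round:.0%} of the round)")
```

The three named checks sum to the full $110B, and nearly a third of the headline number is Amazon money that only arrives if one of the two conditions is met.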

Anthropic pokes the bear. As I’ve been arguing for the last few months, AI is an inherently political technology. This matters because politics, particularly the variety practiced by the current administration, is a blood sport dictated by perception, ego, and honor. In comparison with the relatively cool and dispassionate world of computers, the game is played entirely differently.

Anthropic learned that the hard way this week. It had a $200 million contract for the Department of War to use its Claude models. The contract contained two red lines: first, that Claude would not be used for domestic surveillance of Americans, and second, that it would not be used to power autonomous weapons. The Pentagon wanted to be able to implement “all lawful uses.” The two sides could not come to a compromise.

The miscalculation by Anthropic was to think that this was an administration that would be above revenge. It distinctly is not. Initially, Trump banned the federal government from using Claude (totally fine, it’s a free market). Then Pete Hegseth escalated by designating Anthropic a “supply chain risk.” This is a status that has never been applied to an American company before, and it would ban any contractor for the Department of War from doing business with Anthropic.

Since that includes every major cloud provider, this order could essentially obliterate Anthropic from the face of the earth. Frankly, I doubt this will legally hold up. Supply chain risk designation typically has to go through the Federal Acquisition Security Council, a seven-member body of departments that deliberates on this sort of status. There is some precedent that the Department of War can ban vendors from using Anthropic in defense-related services, but there is almost certainly no legal precedent for banning the entirety of a company’s commercial activity.

What this actually reminds me of is the TikTok deal. There, the Trump administration promised broad punishment of a company they disliked. After years of legal battles, the end result was mostly Trump allies getting assets on the cheap. This situation rhymes with that. OpenAI announced on Saturday that they had accepted a contract from the Department of War. And in a happy coincidence, Greg Brockman, a co-founder and President of OpenAI, happens to have just donated $75 million to Trump. Weird how that happens. The major beneficiaries of the TikTok deal also happened to be Trump donors.

Again, I am not a politics writer and wouldn’t pretend to be. I bring this up because politics is business strategy now. If you are a founder, you will have no choice but to think about this stuff because AI regulation is coming for you, no matter who is in charge. Bernie Sanders wants to put a moratorium on building data centers. Ron DeSantis is going to be running on an anti-AI populist platform. And multiple Democrats are promising to break up big tech companies once they are back in power. As painful as it is, you have to think about this stuff now.

I will reserve judgement on whether the OpenAI deal is safer than the Anthropic proposal—as I am writing this on Saturday night the OpenAI team is doing damage control on X and I want to give it some time for it all to settle. Still, I think every reasonable person can agree that the supply chain risk designation is not the way Americans do business.

THE SLOPPENING

The AI feature trap. Koah, a startup building “AdSense for AI,” just raised $20.5 million in a Series A. I spoke with co-founder Nic Baird this week. He told me they now estimate there are roughly 46,000 AI apps in the world—up from 33,000 a year ago. This is the fastest expansion of a software category in history, and almost nobody can afford to use it properly.

Among his customers, he sees major applications with millions of users launch AI feature pilots, see insane engagement, and then hit a wall. Inference costs take a product from hundredths of a cent per user per year to two to three dollars. That’s on the order of a 10,000x increase in cost-to-serve.
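To see where a multiple of that size comes from, here is a rough sketch of the cost-to-serve arithmetic. The baseline range is an assumption: "hundredths of a cent" is read here as one to three hundredths of a cent per user per year, which is my interpretation and not a figure Baird gave. The names are illustrative.

```python
def cost_multiple(before: float, after: float) -> float:
    """How many times more expensive a user becomes to serve."""
    return after / before


# ASSUMPTION: "hundredths of a cent" taken as 1 to 3 hundredths of a cent, in dollars.
BASELINE_LOW, BASELINE_HIGH = 0.0001, 0.0003
# From the text: two to three dollars per user per year with AI features.
AI_LOW, AI_HIGH = 2.0, 3.0

smallest_multiple = cost_multiple(BASELINE_HIGH, AI_LOW)
largest_multiple = cost_multiple(BASELINE_LOW, AI_HIGH)

print(f"Cost-to-serve multiple: {smallest_multiple:,.0f}x to {largest_multiple:,.0f}x")
```

Under those assumptions the jump falls somewhere between several thousand and tens of thousands of times, so 10,000x is a fair order-of-magnitude shorthand.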

The Sloppening is driven by twisting human nature to keep users engaged. Sometimes that’s ad-driven, sometimes it’s subscription-driven. My perspective is that the business model isn’t the disease; it’s the optimization target. So the question with AI ads is: what are they optimizing for?

Right now, the answer is encouraging. AI ads incentivize AI apps to have increased utility and increased engagement. If you’re really using AI with agents, it’s easy to spend hundreds or thousands a month on tokens. The most powerful tools ever built are only accessible to the affluent. Everyone else gets nada. Ads have a way of bridging that gap that I think is noble. As Baird told me: “It would be a tragedy to see one of the most powerful tools we’ve ever created be unavailable to a huge part of the population because we don’t want to do ads.” I remain concerned about engagement-maximization incentives, but those are a result of the internet and human nature. They aren’t unique to AI.

TASTEMAKER

“I now understand the need for faith—pure, blind, fly-in-the-face-of-reason faith—as a small life preserver in the wild and endless sea of a universe ruled by unfeeling laws and totally indifferent to the small, reasoning beings that inhabit it.” Dan Simmons, Hyperion.

Dan Simmons passed away this week at 77. The author wrote one of the greatest sci-fi novels ever in Hyperion. The first time I read this book, I hooted like a loon. I ran around my bedroom cackling like a maniac. I cried at the loneliness and aching contained in the prose. While most sci-fi today overly focuses on sick space battles bro, Simmons understood the crucial thing about genre fiction: fantastical settings are about giving us more freedom to explore the human condition. RIP to a legend. Read Hyperion this week if you haven’t yet; I’ll be rereading it with all of you too.

Go and be kind this week,

Evan

Sponsorships

We are now accepting sponsors for Q1 ’26. If you are interested in reaching my audience of 34K+ founders, investors, and senior tech executives, send me an email at team@gettheleverage.com.