Ending the Quarter With A Bang

The Weekend Leverage, March 29th

We are at the end of one of the wildest, most volatile three-month periods I can think of in technology’s history. I don’t smoke, but after reviewing the news this week, I felt an urge to light a cigarette.

So today, I wanted to look back at the deep currents of the last three months and tie those into some recent headlines. The world is changing so fast, so consistently, that if you don’t pay attention, the important stuff will whip right by.

We’ll get to that, but first, this newsletter is brought to you by returning sponsor Crusoe.

Multi-agent AI has an infrastructure problem.

Every agent interaction generates up to 15x more tokens than a standard chat. Context windows are exploding, exponentially multiplying compute costs. Most inference platforms weren’t built for this. Crusoe Managed Inference was.

As a Day 1 launch partner for NVIDIA’s Nemotron 3 Super—the new open model with a 1-million-token context window and 5x higher throughput for agentic workloads—Crusoe is positioning itself as the inference layer for the agent era.

Bring your own fine-tuned model and their team handles deployment, optimization, and the infrastructure headaches that keep your engineers from building product. The agent era needs a new inference stack. Crusoe is building it.

MY RESEARCH

How can you make sure your career survives the AI transition? The prompt engineer went from a six-figure dream job to obsolete in eighteen months. What happened? The mistake people are making is chasing AI-native job titles that have a shelf life of a few months. The real strategy is becoming what I call a plus-shaped person where you retain your expertise, but use AI to thicken the horizontal bar until you’re genuinely skilled across adjacent functions, not just passingly familiar. You become operationally dense, a bundle that’s hard to unbundle. I break down exactly how to build that stack and why the window to do it is closing fast. Read here.

WHAT MATTERED THIS WEEK?

BIG MONOPOLY

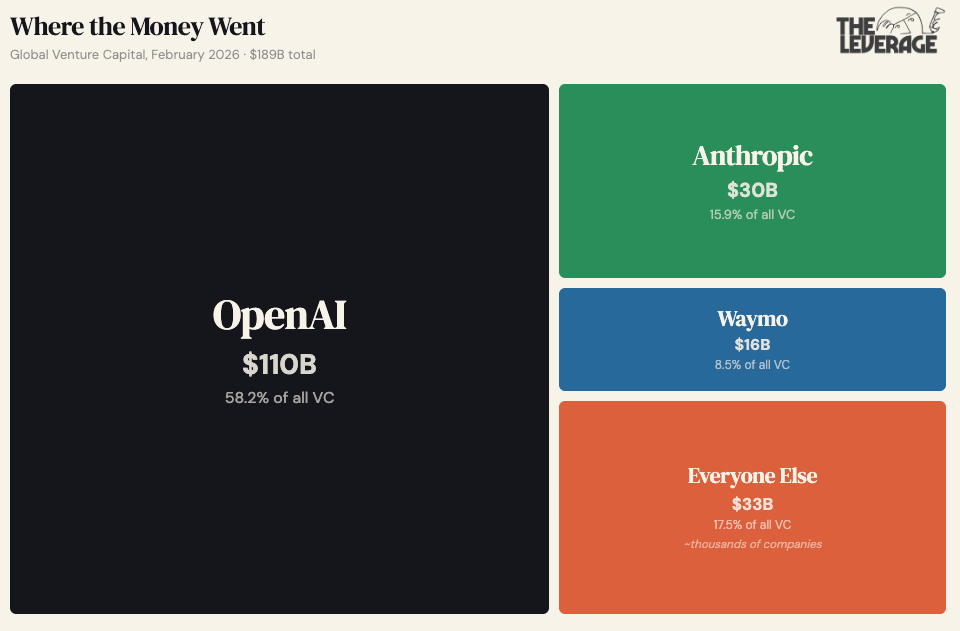

We are very, very, very concentrated in AI right now: The largest companies have raised such staggering amounts of cash that it is easy to grow numb to it all. Rather than just rattle off some round sizes, let’s try to get a little more rigorous with it.

The Herfindahl-Hirschman Index is how the Department of Justice measures market concentration. Under the current guidelines, an HHI below 1,000 is “unconcentrated.” Between 1,000 and 1,800 is “moderately concentrated.” Above 1,800 is “highly concentrated” and triggers antitrust scrutiny.

Nobody has calculated the HHI for venture capital by recipient company. Thank goodness you subscribe to this newsletter so we can solve that together.

In February 2026, global VC totaled $189B. Three companies raised $156B of that: OpenAI ($110B), Anthropic ($30B), Waymo ($16B). The remaining $33B was spread across thousands of companies. To calculate the HHI, you square each company’s percentage share of the market and sum the results. Those three companies alone contribute about 3,711 HHI points (roughly 3,387 + 252 + 72). Add the remaining ~$33B, which contributes well under one point even if it is split evenly across just 1,000 companies, and February 2026’s venture capital HHI lands around 3,700. That’s more than double the DOJ’s 1,800-point threshold for “highly concentrated.” It’s not close to the line. It obliterates the line.
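For anyone who wants to check the arithmetic, here is a minimal sketch of the calculation using only the round sizes quoted above. The even split of the remainder across 1,000 companies is an assumption made purely for illustration, and the variable names are mine. The result should land within a point or two of the figure in the text, with any gap coming from rounding the shares.

```python
# Round sizes in billions of dollars, as quoted in the text for February 2026.
TOTAL_VC = 189.0
MEGA_ROUNDS = {"OpenAI": 110.0, "Anthropic": 30.0, "Waymo": 16.0}


def hhi(amounts, total):
    """Herfindahl-Hirschman Index: the sum of squared percentage shares."""
    return sum((100.0 * amount / total) ** 2 for amount in amounts)


top_three = hhi(MEGA_ROUNDS.values(), TOTAL_VC)

# Assumption: the remaining money is split evenly across 1,000 companies.
remainder = TOTAL_VC - sum(MEGA_ROUNDS.values())
long_tail = hhi([remainder / 1000] * 1000, TOTAL_VC)

total_hhi = top_three + long_tail
print(f"top three: {top_three:.0f}")
print(f"long tail: {long_tail:.2f}")
print(f"total: {total_hhi:.0f} ({total_hhi / 1800:.2f}x the 1,800 threshold)")
```

Because shares are squared, the long tail barely registers. Splitting the remainder across more companies only pushes its contribution closer to zero.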

By DOJ standards, the AI venture capital market sits at roughly double the level the government treats as highly concentrated. There are lots of caveats my critics could add here: these aren’t really “venture capital” rounds, or something like that. While technically accurate, this misses the spirit of my comments. However you define the boundaries of this market, there is no denying that we, as an industry, are betting it all on these companies.

Without OpenAI-scale deals, the monthly numbers look ordinary. US funding dropped to ~$13B in March. It wasn’t that startups stopped happening. The three companies that were the venture market simply stopped raising for a month.

AI startups captured 41% of all venture dollars on Carta in 2025. By February 2026, that figure sits at 90%. The venture industry has become a concentrated bet on three companies with returns that exist on paper, denominated in marks that depend on future mega-rounds continuing. If either Anthropic or OpenAI fails, it will send a cascading series of catastrophes through the entirety of the United States economy. Yikes!

BIG ROBOT

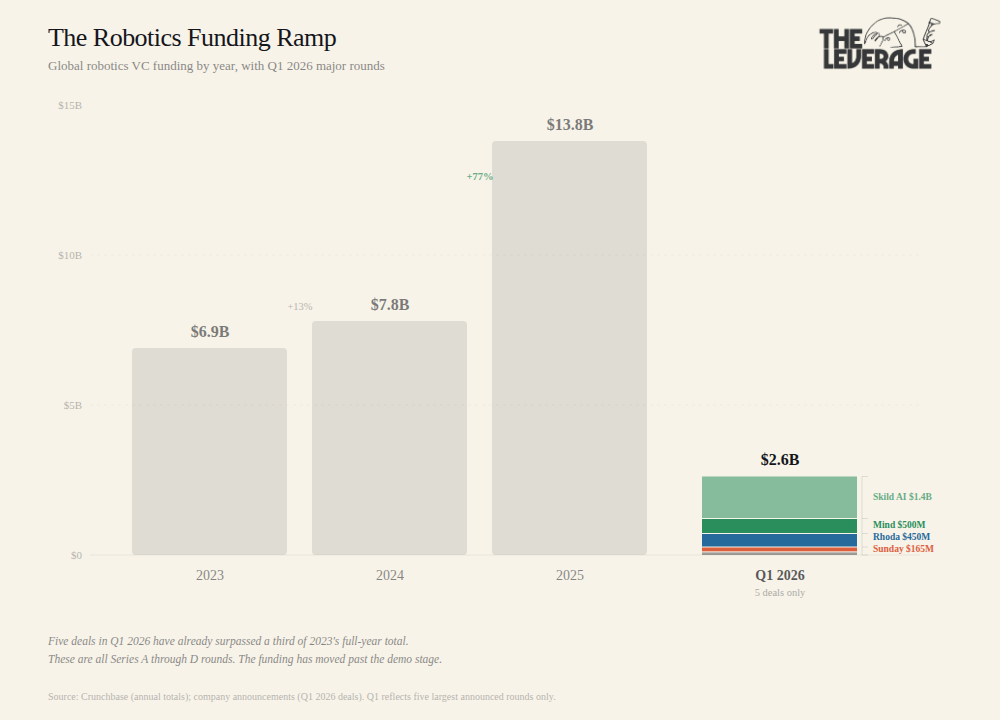

Four robotics companies raised a combined $1.2 billion in a single week in March. Mind Robotics, a Rivian spinout building industrial robots trained on factory data, pulled in $500 million in a Series A co-led by Accel and a16z. Rhoda AI emerged from 18 months of stealth with $450 million for its video-predictive robot intelligence platform. Sunday raised $165 million at a $1.15 billion valuation for a household humanoid called Memo. And Oxa, a UK-based autonomous logistics company already deploying with DHL and bp, closed $103 million in its Series D.

Add Skild AI’s $1.4 billion from January (Series C, led by SoftBank, $14 billion valuation) and you get $2.6 billion across five deals in a single quarter. That is just from the headline rounds! I’ve heard rumors of similar deals, not yet announced.

That number deserves context. In 2023, total robotics VC was $6.9 billion globally, per Crunchbase. In 2024, $7.8 billion. Then 2025 nearly doubled the prior year to $13.8 billion. Five deals in Q1 2026 have already cleared more than a third of what the entire sector raised in 2023.
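The comparison is easy to reproduce. This is a quick sketch that uses only the round sizes and annual totals quoted above; the variable names are mine.

```python
# Headline rounds quoted in the text, in millions of dollars.
q1_2026_rounds = {
    "Skild AI": 1400,
    "Mind Robotics": 500,
    "Rhoda AI": 450,
    "Sunday": 165,
    "Oxa": 103,
}

# Annual global robotics VC totals quoted in the text, in billions of dollars.
annual_totals_bn = {2023: 6.9, 2024: 7.8, 2025: 13.8}

q1_total_bn = sum(q1_2026_rounds.values()) / 1000
share_of_2023 = q1_total_bn / annual_totals_bn[2023]
growth_2025 = annual_totals_bn[2025] / annual_totals_bn[2024] - 1

print(f"five headline rounds: ${q1_total_bn:.2f}B")
print(f"share of the 2023 total: {share_of_2023:.0%}")
print(f"2025 growth over 2024: {growth_2025:.0%}")
```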

More important than the volume is how the composition of the funding is shifting. Seed and Series A rounds are meant to fund R&D where founders make science and sick demos. Most of the rounds closing now are Series B through D. Sunday’s CEO said it plainly when he announced their raise: “We raised our Series B to stop giving demos.” The money is moving towards “how fast can we ship it?”

I modeled the unit economics of humanoid robots in “A Robot Walks Into a Coffee Shop” last year. The crossover point where a robot becomes cheaper than a minimum-wage worker landed around $20-30 per task-hour for early deployments, dropping toward $8-12 at scale with declining hardware costs and increasing utilization rates. Sunday’s raise implies they think household economics are within reach in 3-5 years, which means their internal cost models for Memo probably target sub-$15 per task-hour at scale. If they can produce a robot that reliably folds laundry and clears tables for $10-12/hour of operation, the TAM is every dual-income household in the developed world. You should view these later-stage rounds as the market indicating there is a very real chance of these numbers being achieved in the next five years.

THE SLOPPENING

Wikipedia banned AI-generated text, and the real story isn’t ideological. Everyone’s favorite way to cheat on their homework banned AI writing for its articles in a 40 to 2 vote. There are, of course, ideological concerns around LLMs, but the real problem is economic.

Generating an AI article takes about 30 seconds. Verifying one takes a Wikipedia editor 4 to 6 hours of checking citations, verifying studies, etc. That works out to somewhere between 480:1 and 720:1, so call it a conservative 500:1 ratio. For every unit of effort to create AI content, it takes roughly 500 units of effort to confirm it’s true.
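Here is a small sketch of that asymmetry, showing where the 500:1 shorthand falls in the range implied by 4 to 6 hours of verification. The last two lines are a hypothetical upper bound of my own: they assume a single agent that emits one article every 30 seconds around the clock, which is an illustration rather than a measurement of any real bot.

```python
GENERATE_SECONDS = 30   # time to generate one article, per the text
VERIFY_HOURS = (4, 6)   # editor time to verify one article, per the text


def effort_ratio(verify_hours, generate_seconds):
    """Seconds of verification required per second of generation."""
    return verify_hours * 3600 / generate_seconds


low, high = (effort_ratio(hours, GENERATE_SECONDS) for hours in VERIFY_HOURS)
print(f"verification-to-generation ratio: {low:.0f}:1 to {high:.0f}:1")

# Hypothetical upper bound: one agent generating nonstop for 24 hours.
articles_per_day = 24 * 3600 // GENERATE_SECONDS
editor_hours_per_day = articles_per_day * VERIFY_HOURS[0]
print(f"{articles_per_day} articles/day -> {editor_hours_per_day} editor-hours/day")
```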

The trigger was a bot called TomWikiAssist that autonomously authored and edited multiple articles in early March. One agent, running 24 hours a day, generated content faster than the entire English Wikipedia editor community could review it. The human editors were on the wrong side of an AI exponential.

I started to wonder how pervasive this problem is globally. Two weeks ago, I talked about how the WSJ Opinion page was publishing AI slop, and so too was basically every media company in America. Has AI-generated content taken over the entire internet already and we just didn’t notice? I think yes.

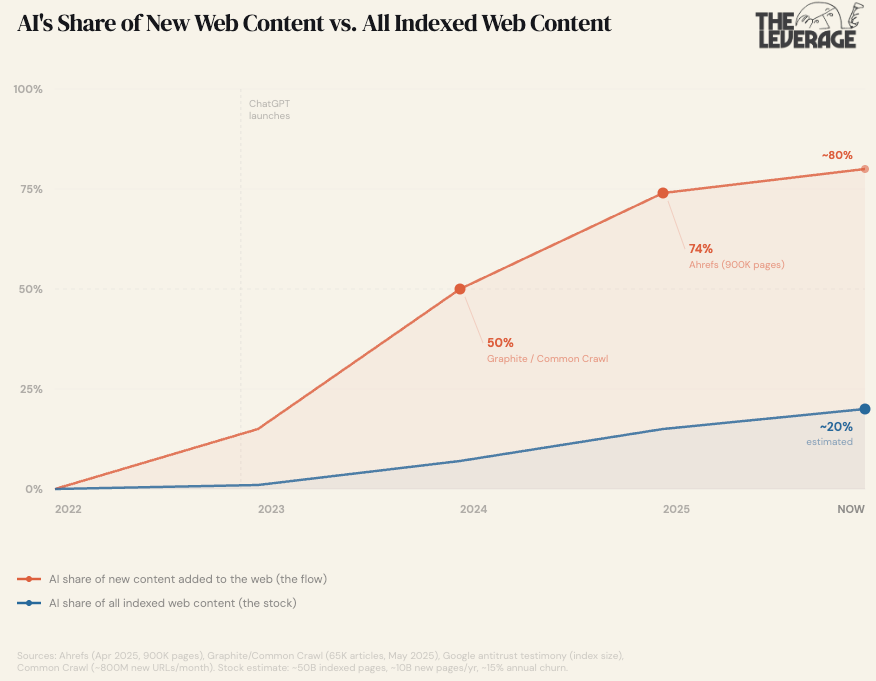

There has been surprisingly little academic research on this topic, so we are stuck pulling together a variety of different data points. I wanted to distinguish between the flow (new content being added to the web) and the stock (everything that already exists). For the flow, I used two large-scale studies: Graphite’s analysis of 65,000 articles, which showed AI-generated articles crossing 50% by late 2024, and Ahrefs’ study of 900,000 new pages from April 2025, which found that 74% contained at least some AI-generated content. For the stock, I estimated from Google’s indexed web (~50 billion pages per antitrust testimony and industry estimates), Common Crawl’s monthly crawl data (~800 million new unique URLs per month, or roughly 10 billion per year), and a conservative ~15% annual churn rate for deindexed and expired pages. To be clear, this is a guesstimate, but it feels reasonable to assume something radical is underway.
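To make the stock-versus-flow distinction concrete, here is a toy model built on the inputs above. It is a sketch under loud assumptions, not the calculation behind the chart. It assumes the starting stock contains no AI pages, that churn removes AI and human pages proportionally, and that both the 10 billion new pages per year and the 74% AI share of new pages hold constant. That 74% figure counts pages with at least some AI content, so treat it as generous.

```python
def step_year(stock_bn, ai_bn, new_pages_bn, ai_flow_share, churn):
    """Advance the page stock by one year.

    Churn is applied proportionally to AI and human pages (an assumption),
    then the year's new pages are added.
    """
    surviving_total = stock_bn * (1 - churn)
    surviving_ai = ai_bn * (1 - churn)
    return (
        surviving_total + new_pages_bn,
        surviving_ai + new_pages_bn * ai_flow_share,
    )


# Assumption: start from ~50B indexed pages with no AI pages counted yet.
stock, ai = 50.0, 0.0
history = []
for year in range(1, 6):
    stock, ai = step_year(
        stock, ai, new_pages_bn=10.0, ai_flow_share=0.74, churn=0.15
    )
    history.append((year, stock, ai / stock))

for year, total, ai_share in history:
    print(f"year {year}: stock {total:.1f}B pages, AI share {ai_share:.0%}")
```

Under these assumptions the AI share of the stock climbs every year but lags well behind the AI share of the flow. Changing the starting share or the churn rate moves the numbers without changing that shape.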

AI has already taken over the flow of new content. The stock of accumulated human writing is the only thing keeping the internet majority-human, and it’s being diluted every month.

The natural follow-up question is whether this change is a bad thing. After all, the media we consume shapes who we are and how we think. If all you watch is 9/11 conspiracy videos, eventually your number one thought will be “jet fuel doesn’t melt steel beams” or something. So if AI is helping to write at least 80% of the new content we are consuming, what happens?

Dan Williams argues that LLMs are fundamentally a technocratizing technology. Where social media democratized information by shifting power from expert gatekeepers to ordinary people (with all the conspiracy theories and populism that came with it), LLMs push the other direction. They converge users toward expert consensus. Ask Claude, ChatGPT, Gemini, or even Grok about vaccines, climate change, or trade policy and you’ll get broadly similar, evidence-based, expert-aligned answers. Dylan Matthews calls this “epistemic convergence” and draws an explicit analogy to network television in the 1960s, when three channels gave 90% of Americans the same nightly news.

In many cases, this is probably good. If LLMs pull millions of people away from conspiracy theories and toward peer-reviewed evidence, that’s a win. In this newsletter, we’ve previously discussed research showing LLMs can correct conspiratorial beliefs that most people assumed were beyond the reach of rational persuasion. So, if you think social media’s epistemic fragmentation is a crisis (and I do, mostly), LLMs are the closest thing we have to a corrective. Still, convergence toward a consensus is only as good as the consensus itself. And in the most important topics, there is often very little consensus to begin with.

That’s the Wikipedia problem. An LLM writing a Wikipedia article doesn’t just risk fabricating a citation. It also pulls the article toward the mean, and the topics where Wikipedia is most valuable are precisely the ones where the mean is most dangerous. Having an opinionated editor whose incentives are to maximize truth is extremely valuable because “experts” often have incentives that are not oriented towards truth-maximization. In cases like WMDs in Iraq, Covid denialism, (insert world crisis here), the correct view was held by a minority who pushed against the mainstream. Wikipedia’s editorial model, at its best, gives those minority views room through human editors who know enough to flag what the consensus is missing.

I’m not even saying AI content is worse than human content! I’m saying it’s wrong differently. Human editors are wrong in diverse, idiosyncratic ways. One editor gets an issue wrong; another gets it right. The errors are uncorrelated, which means the system self-corrects over time as editors argue with each other. AI is wrong in consensus ways, biased by its methods of training.

The Sloppening is about understanding how human thought and attention are reshaped by the economics of the internet. Expect to see repeats of this Wikipedia story in every single field where human judgement is the key financial bottleneck.

TASTEMAKER

Aussie punk rage: On a recent Tuesday afternoon, I showed up at my local gym, seemingly interrupting a testosterone convention. There were multiple 405-lb deadlifts happening, accompanied by a chorus of grunting dudes doing 315-lb bench presses for reps. Every jacked guy within 10 miles of Boston was in the weight room with me that day. I knew that to not shame my family, I would need to lift some heavy-ass weights. To make that possible, I dug deep into my lifting playlists and pulled out something powerful.

Amyl and The Sniffers is a punk band from Australia that is equal parts cheeky and pissed off. In an interview, their lead singer said the name comes from Australian slang for poppers — “you sniff it, it lasts for 30 seconds and then you have a headache — and that’s what we’re like!” They have a pulsating, pounding energy that makes you want to run through a wall. For my heaviest sets, I played their 2021 song Hertz. Use with caution, side effects include sweat, anger, rage, and a desire to grow a mullet. The missus and I are looking forward to moshing in the pit at their show in Boston this summer.

Go and be kind this week,

Evan

Sponsorships

We are now accepting sponsors for Q2 ’26. If you are interested in reaching my audience of 34K+ founders, investors, and senior tech executives, send me an email at team@gettheleverage.com.
