The Middle Gets Eaten

What AI is actually doing to engineering teams.

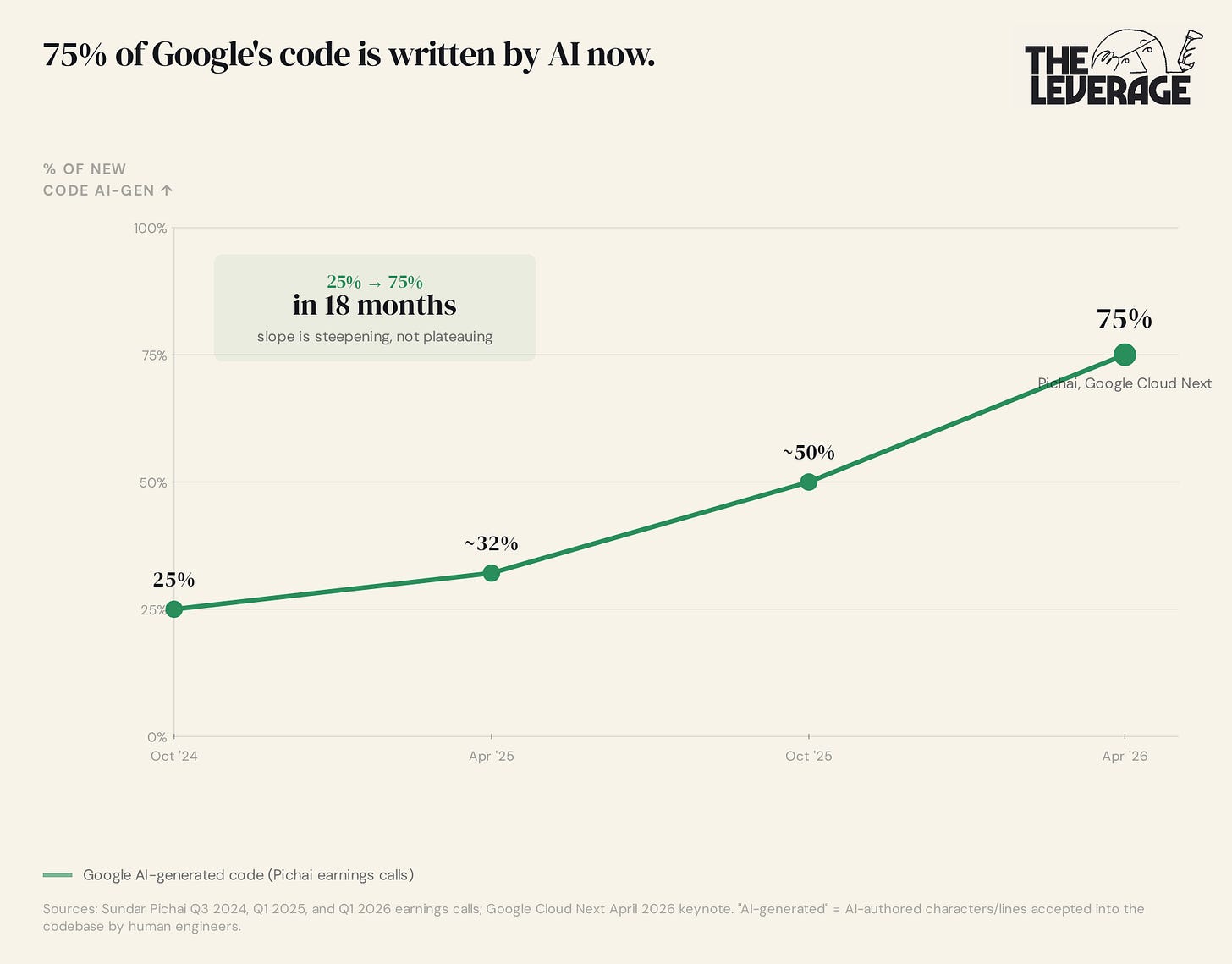

On April 15, Snap fired 1,000 engineers, roughly 16% of its workforce, and disclosed in an SEC filing that 65% of new code at the company is now AI-generated.

A week later, Sundar Pichai posted that 75% of new code at Google is AI-generated, up from 25% in late 2024. At that rate it hits 100% before the end of 2027.
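The "at that rate" claim is a straight-line extrapolation, and it is easy to sanity-check. The sketch below is a rough illustration under stated assumptions: "late 2024" is pinned to November 1, 2024, the post is dated April 22, 2026 (a week after April 15, with the year inferred from the hiring data cited below), and growth is treated as linear, which is the crudest possible model. The function name `linear_crossing` is made up for this example.

```python
from datetime import date, timedelta

# Assumed dates; the text only says "late 2024" and "a week later".
START = date(2024, 11, 1)    # 25% of new code AI-generated
LATEST = date(2026, 4, 22)   # 75% of new code AI-generated


def linear_crossing(start, start_pct, latest, latest_pct, target_pct=100.0):
    """Return the date a straight line through the two points reaches target_pct."""
    points_per_day = (latest_pct - start_pct) / (latest - start).days
    days_remaining = (target_pct - latest_pct) / points_per_day
    # Adding a timedelta to a date uses whole days only.
    return latest + timedelta(days=days_remaining)


crossing = linear_crossing(START, 25.0, LATEST, 75.0)
print(crossing)
```

Under these assumptions the line crosses 100% in early 2027, comfortably inside the "before the end of 2027" window. Shifting the assumed start date by a month or two moves the answer by only a few weeks.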

It is tempting to gesticulate wildly at these data points, yell about how we are in the end times for computer jobs, and then pout ferociously.

However, the aggregate hiring data makes this supposedly bleak future more nuanced. Engineering job postings hit 67,000 globally in March 2026, the highest in years, while product management postings were the highest in three years. And yet somehow, underneath the aggregate, entry-level developer postings dropped 67% between 2023 and 2024, and employment for software developers aged 22 to 25 has fallen roughly 20% from its late-2022 peak. And as I discussed two weeks ago, designer job openings have flatlined in the last year or so.

What the hell is happening here? How can senior jobs, aka the most expensive type of hire in existence, be increasing in openings? While junior jobs, aka zitty 21-year-olds you pay with oatmeal gruel and Zyns, are declining? How can designers be going away while everyone else on their team is getting more headcount?

The short answer is that AI is transferring capabilities up and down the org chart, hollowing out the middle of every team in the process. But let me give you the longer, yet more useful answer in two ways:

The historical parallels to the times work has changed this dramatically before

What you, as an operator or founder, can actually do to prepare for this dangerous, exciting, frightening future.

Why this has happened before

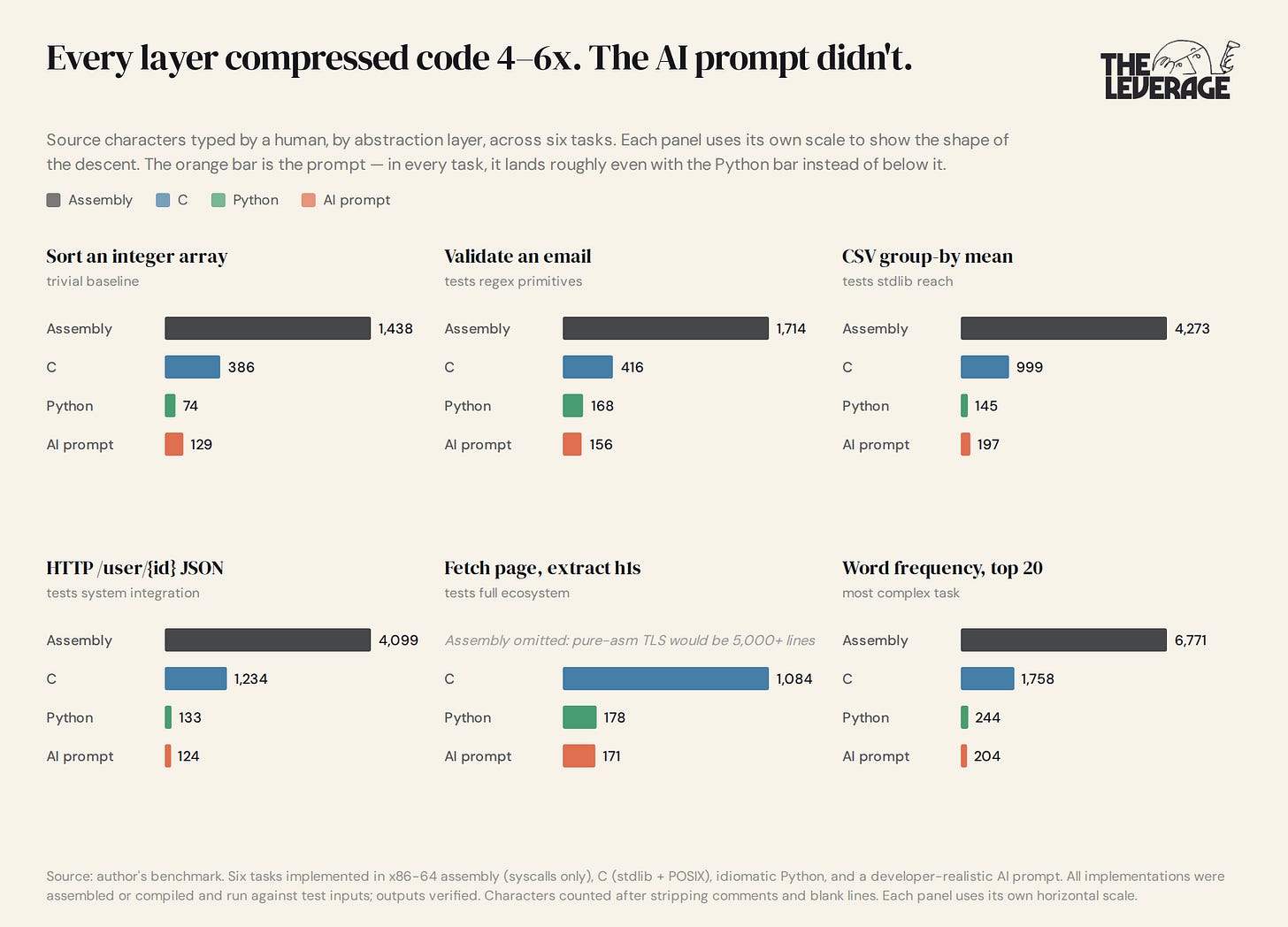

In 1973, Dennis Ritchie and Ken Thompson rewrote most of the Unix kernel in C. The heralded assembly programmers around them at Bell Labs understood the implications immediately. However, the good folks at IBM insisted that nobody would ship serious software in C, that the performance penalty was unacceptable, that real systems work required hand-tuned assembly. They were craftsmen. They had taste.

And, in their defense, they were technically correct on every individual point. They were also about to be unemployed. The abstraction layer above them had just compressed the act of writing software by an order of magnitude, and the labor market was not going to keep paying for the old layer at the old rates.

This is not a one-time event. It has happened many times throughout the history of computing. Each layer of the stack exists because the layer below was too tedious for humans to write directly.

I wanted to understand whether AI was a continuation of this story. So me and my best friend Claude Code built a benchmark to find out. I designed six tasks, each with four implementations: assembly, C, Python, and the prompt you’d actually type into Cursor to get working code on the first try. From there, I made sure everything compiled, ran, and produced verified output. Then I counted the characters a human had to type at each layer.

The results are far more…complicated than you might think. AI is not a clean continuation of new abstraction layers making coding shorter and more powerful.

I had assumed AI prompts would compress Python by 3 to 10x, the same order of magnitude as the previous transitions, and that the labor argument would follow naturally from the compression argument. The data does not support that. AI prompts are not a 3-to-10x compressor of human-typed source. On this benchmark, they are roughly a 1x compressor, aka about the same length.

Once I sat with that result, it got stranger. In several of my six tasks, the prompts were actually longer than the Python they produced. Every detail I omitted, the AI guessed wrong on, which forced me to either pre-specify or burn turns correcting. The compression that prompts can deliver is mostly compression of implementation choices, not characters. Python is already extraordinarily close to plain English. df.groupby("category")["value"].mean() is not meaningfully shorter as a sentence than as code. Take the trade-off seriously and you end up with roughly one character of prompt per character of Python. Sometimes more.
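To make the character counting concrete, here is a minimal sketch of the bookkeeping. It is not the benchmark harness itself: the helper names `typed_chars` and `compression_ratio` are hypothetical, the English prompt is one I made up for illustration, and "characters typed" is assumed to mean everything except leading and trailing whitespace.

```python
def typed_chars(text: str) -> int:
    """Characters a human would type, ignoring leading/trailing whitespace (an assumption)."""
    return len(text.strip())


def compression_ratio(lower_layer: str, higher_layer: str) -> float:
    """Lower-layer characters per higher-layer character.

    Above 1 means the higher layer is shorter; below 1 means it is longer.
    """
    return typed_chars(lower_layer) / typed_chars(higher_layer)


# The one-liner quoted above, plus a hypothetical plain-English prompt for it.
python_source = 'df.groupby("category")["value"].mean()'
prompt = "Group df by category and average the value column"

ratio = compression_ratio(python_source, prompt)
print(
    f"{typed_chars(python_source)} chars of Python, "
    f"{typed_chars(prompt)} chars of prompt, ratio {ratio:.2f}"
)
```

For this one line the ratio lands a bit below 1, meaning the sentence is slightly longer than the code. That matches the pattern described above, though a single hand-written prompt proves nothing on its own.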

While prompts aren’t more time-efficient, they are more expertise-efficient. You can give an AI agent a generic prompt, and it can then reference its memory and the context you give it to figure out the details on its own. And most importantly, even the dolts in your organization, say a newsletter writer who can bench 225 for reps, are able to “write code” without actually writing any code. This is remarkably similar to how you could theoretically code a videogame in assembly, but it is easier in C++.

The AI layer is not “the next 5x compressor.” It is something else. And the question is whether that something else is enough to drive the same labor-market restructuring that the previous transitions drove.

The theory

Compression ratios were never the actual mechanism by which abstraction layers reorganized labor. The C-to-Python transition did not reduce demand for C programmers because Python was 6x shorter. It reduced demand because Python made the average analyst capable of producing working code that previously required a specialist. The keystrokes saved were downstream of that capability transfer, not the cause of it.

The AI layer does the same thing. It does not need to compress characters to reorganize the function below it, because characters were never what mattered. Capability transfer is what mattered. And capability transfer is exactly what a prompt does, even at 1x compression.

That reframing is what makes this fit into the historical sequence rather than break out of it. There is no historical case of an abstraction layer arriving without restructuring the labor of the function below it, and there is no historical case where the magnitude of that restructuring was predictable from a character count. The assembly programmers at IBM in 1975 were not measuring lines saved per ship-week. They were watching their juniors disappear and assuming the trend would stop above their pay grade. It did not.

I would argue that every knowledge workflow has something like this:

A brief writer who defines what should exist

A translator who turns the brief into a spec

A producer who executes the spec

A reviewer who catches errors

A judge who decides what ships

When an abstraction layer arrives, the brief writer gets empowered (they ship directly through prompts now), the translator and producer get eliminated (the brief goes straight to the AI, which is now the producer), and the reviewer and judge get promoted (their judgment becomes the constraint on output quality, and their compensation expands accordingly).

Take a software product team shipping a new feature. The product manager writes the PRD defining what should exist. The staff engineer or tech lead translates that PRD into architectural decisions, ticket breakdowns, and a technical spec. Mid-level engineers execute the spec by writing the code. Code reviewers and QA catch the bugs. The engineering lead decides what ships to production.

Now, with tools like Cursor and Claude Code, the PM gets empowered to ship working features directly through natural language prompts. The tech lead translator and the mid-level engineer producer get yeeted out of a job because the PRD goes straight to the AI, which produces both the architectural plan and the code. And the engineering lead gets promoted because their taste, security judgment, and ship/no-ship calls are now the only constraint between prompt and production. They now get paid fat stacks of comp.

The translator and producer roles are exactly what we used to call “junior” and “mid-level.” The reviewer and judge roles are exactly what we call “senior” and “specialist.” That is why the aggregate hiring data looks the way it does: senior hiring is up because the constraint-layer roles are scarce and high-leverage, junior hiring is collapsing because the translator and producer roles are being absorbed by the abstraction layer, and design has uniquely flatlined because its capabilities crossed the threshold first.
