The Slop Factory Needs Line Inspectors

Some thoughts on design and the future of work

A designer used to be the monarch of software. They would opine on the correct shade of blue for buttons or the proper order of navigation tabs. All of the inputs were in their purview. Designers also asserted dominion over software’s outputs, stealing them from product managers and engineers. They took dashboards, tables, charts, pages, and every other visual artifact that software created in response to user inputs. Each designer, clad in a $100 black t-shirt and quoting Rick Rubin, would act as the arbiter of taste for every screen in every application at every company. Everything was a design decision.

AI products are eliminating the designer from both sides simultaneously.

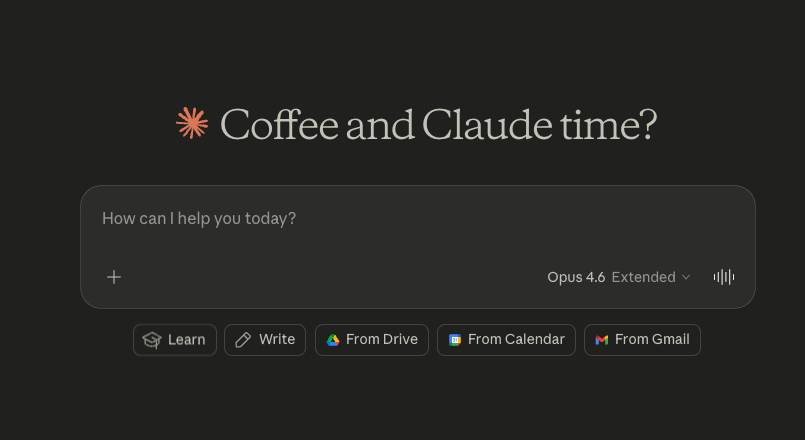

The input side has converged on a text box. You type or speak what you want. There is a single component that absorbs infinite intent. That side (seemingly) needs little design. It feels like every app on the planet now opens to a text box.

The output side is dynamically generated. The AI decides whether to respond with a paragraph, a chart, a table, a working application, or a piece of code. It makes that decision in real time, for this specific user, for this specific query. And once again, there is seemingly no designer required.

Text in, dynamic components out. Neither side of the equation requires the profession that has spent three decades working the space between them. This does not mean the output is always good. Sometimes a dashboard you can glance at is faster than asking a chatbot. But the trajectory is clear: an AI agent is being given the power that a designer used to have.

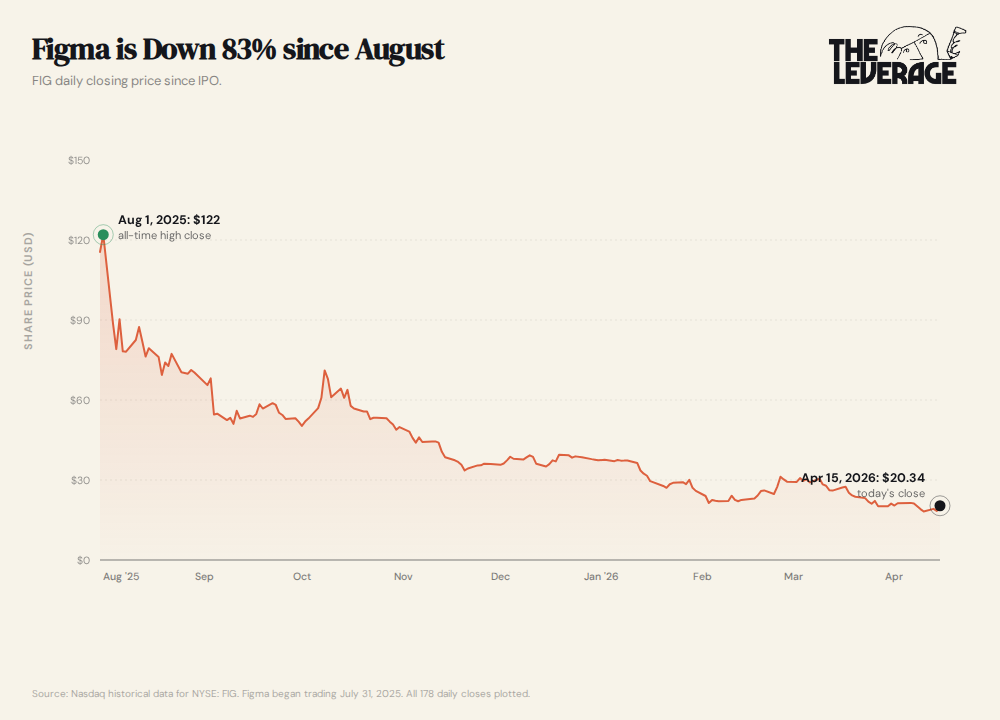

What is happening to design is the canary, not the coal mine. Design is the first creative knowledge profession where both the input and the output are being automated at the same time. This has resulted in a hollowing of the profession. It is an extinction-level event for previous design workflows, as best shown by Figma’s nearly 85% collapse in stock price since August.

Other functions have been hit by AI, but the exposure pattern is different. Customer support got automated on both sides first, with chatbots handling the input and generating the output. But those tasks are transactional, not creative. The automation worked (at least partially) because most support interactions are pattern-matchable. Copywriting has AI on both sides too: briefs go in, drafts come out. But humans still own strategy, editing, and brand voice on the output side. Data analysis is closer to design’s predicament than most people realize, with natural language queries replacing the input and AI-generated charts replacing the output. But the analyst still owns the interpretation layer.

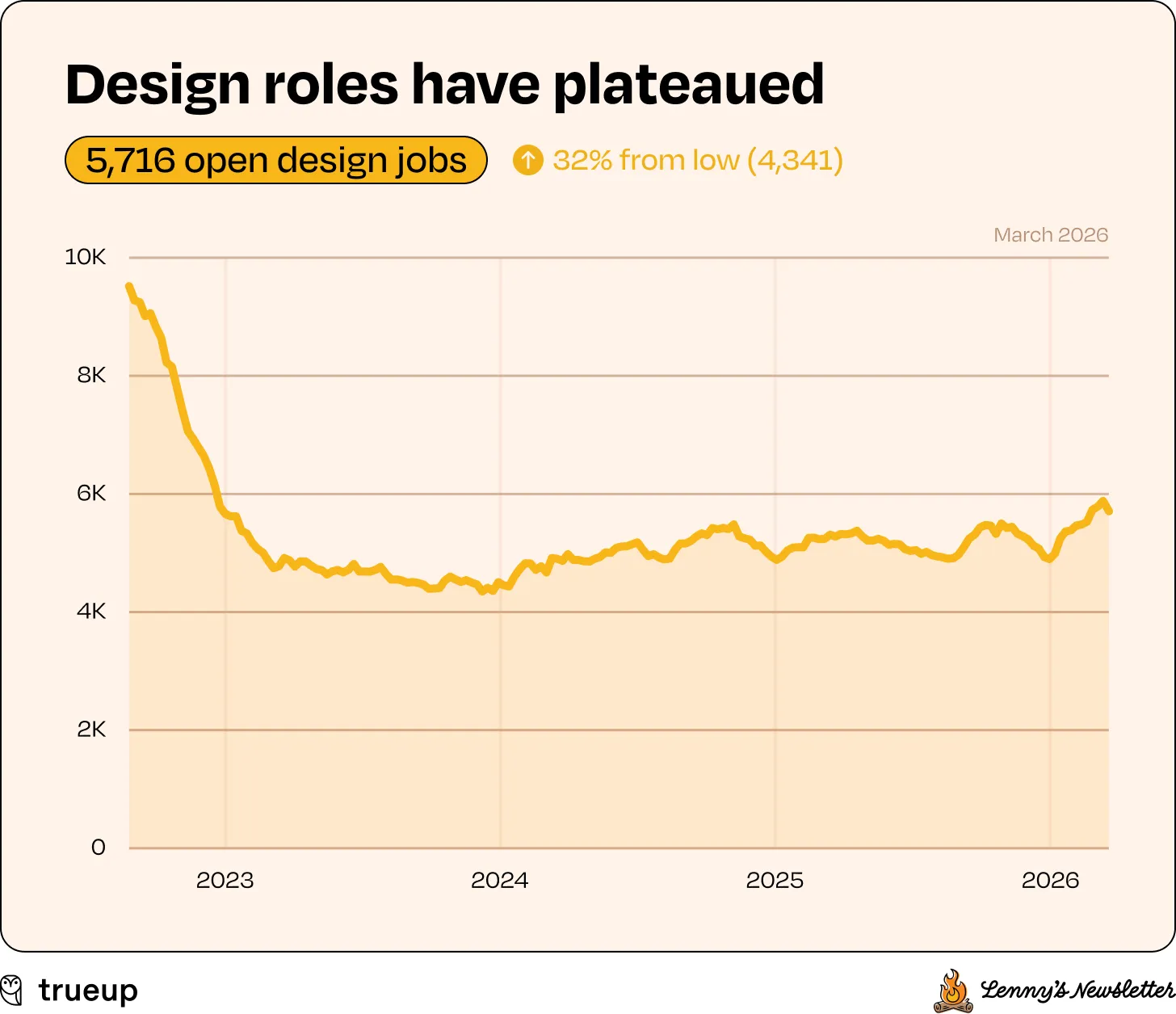

Design is the sharpest version of the AI job-replacement problem because it was supposed to require taste, spatial reasoning, and aesthetic judgment. These were the skills we assumed AI could not replicate. When both sides of a workflow get automated, the job shrinks. That is what is happening to design right now, and the data is starting to reflect it.

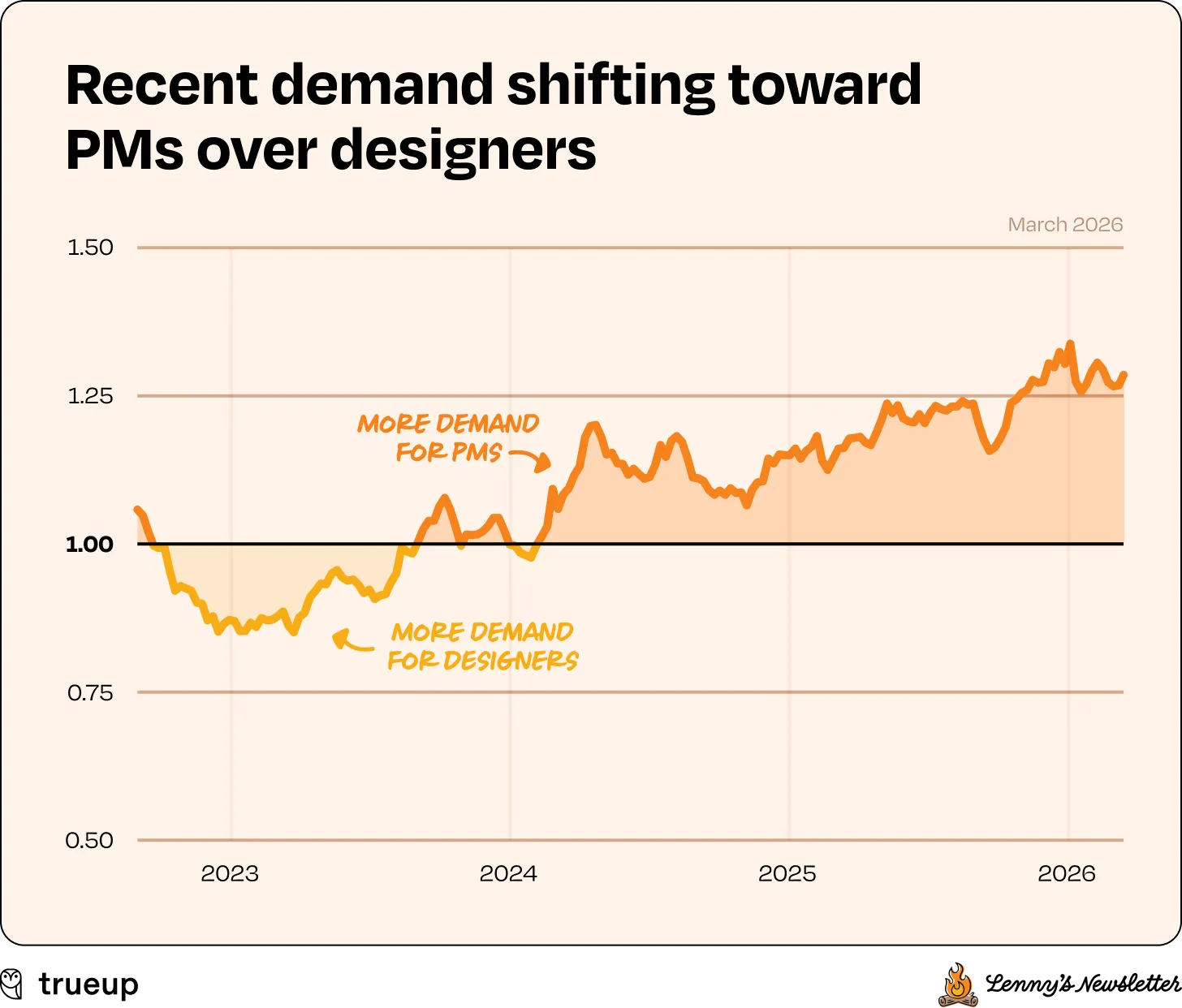

According to analysis done by friend of the publication Lenny Rachitsky, the number of open design roles has completely plateaued.

Simultaneously, engineering jobs and product management jobs are soaring.

The data shows that two of the three functions recovered from the post-2022 tech layoff cycle, and one simply stopped mattering as much.

But the hiring data is only the first signal. The second is harder to explain and more analytically interesting: the design tool market is booming at the same time.

Figma crossed $1 billion in annual revenue in 2025, growing 41% year-over-year. The company is guiding to $1.37 billion for 2026. Its net dollar retention rate hit 136%, the highest in ten quarters. More than half of its paid customers spending over $100,000 in annual recurring revenue were using Figma Make, its AI generation feature, every week by Q4 2025. In March 2026, Figma began enforcing monthly AI credit limits and charging for consumption beyond them. The design tool is becoming an AI generation platform.

That tension is the story. Companies are spending more on design infrastructure while hiring fewer designers. The design tools are getting more valuable as the constraint layer even as the people who used to wield them are getting displaced. Figma’s revenue growth and the TrueUp hiring plateau are two sides of the same structural shift. We are going from designers creating individual screens to design systems governing AI-generated output.

Goldman Sachs Research recently identified graphic design as one of the specific industries where employment growth has already fallen below its pre-AI trend, alongside marketing consulting, office administration, and call centers. The World Economic Forum’s Future of Jobs 2025 report ranked “graphic designer” as the #11 fastest-declining job role heading toward 2030, a stark reversal from the previous report two years earlier, which categorized graphic design as a “moderately growing” profession. The Bureau of Labor Statistics projects graphic designer employment growth of just 2% over the next decade, well below the average for all occupations. And according to industry surveys cited by AIGA, 75% of designers now use AI tools, up from 35% in 2023, while 32% of design job listings now mention AI skills, up from 3% in 2023. The job description is changing faster than the headcount is being replaced.

The natural question is why. And the answer requires understanding what AI native design actually looks like, because the companies building it have made a set of interface choices that carry enormous implications for the design profession. Three paradigms have emerged. Only one of them is genuinely new.

Understanding which is which tells you everything about where design, and by extension work in general, is headed.

How Do You Manage What AI Makes?

When AI takes over more of the actual making, the remaining human job is governance. You spend your time guiding what the AI generates. You are the line inspector at the slop factory, making sure that everything being produced is edible. The interface you use to do that is not a design decision in the traditional sense. It is more akin to a deployment decision. You are making operational choices. To manage those operations, three paradigms have emerged, each reflecting a different answer to the question: how many AI outputs are you managing at once, and how much control do you need over each one?

Chat is for managing a single generation in real time. You prompt, the AI produces, you react, you refine. The true winners of the last few years built their businesses on the back of this paradigm with ChatGPT as the most prominent example. This design collapsed both the input and the output into one conversational flow, and it is where the overwhelming majority of AI revenue concentrates. Chat works because the AI chooses the output format (text, code, chart, app) in real time, and the user never navigates to anything. There is relatively little to design. There is only the conversation. The team structure implications are stark. OpenAI runs what its own job postings describe as “a small team of product designers” serving a product with hundreds of millions of users. Vercel, which ships v0 and is building the AI-native web, currently lists 30 open engineering roles and exactly 1 open design role. When the interface is a text box that generates its own output, the ratio of designers to engineers approaches zero.

Nodes are for managing a repeatable generation pipeline. This is most prominently done by Flora, ComfyUI, LangFlow, and n8n. Where chat handles one generation at a time, nodes let you build a visible, inspectable workflow: step one feeds into step two, branches at step three, produces four variations at step four. This is the paradigm that creative agencies love because it preserves the canvas, the spatial environment designers were trained to think in. Nodes are mostly a better tool for reproducible AI workflows, and partly a refuge for designers who need a surface they recognize. Figma acquiring the node-based editor Weavy on October 30, 2025, and rebranding it as Figma Weave, tells you which direction the design tool incumbents think this is going. The senior person builds the flow while everyone else operates within it.

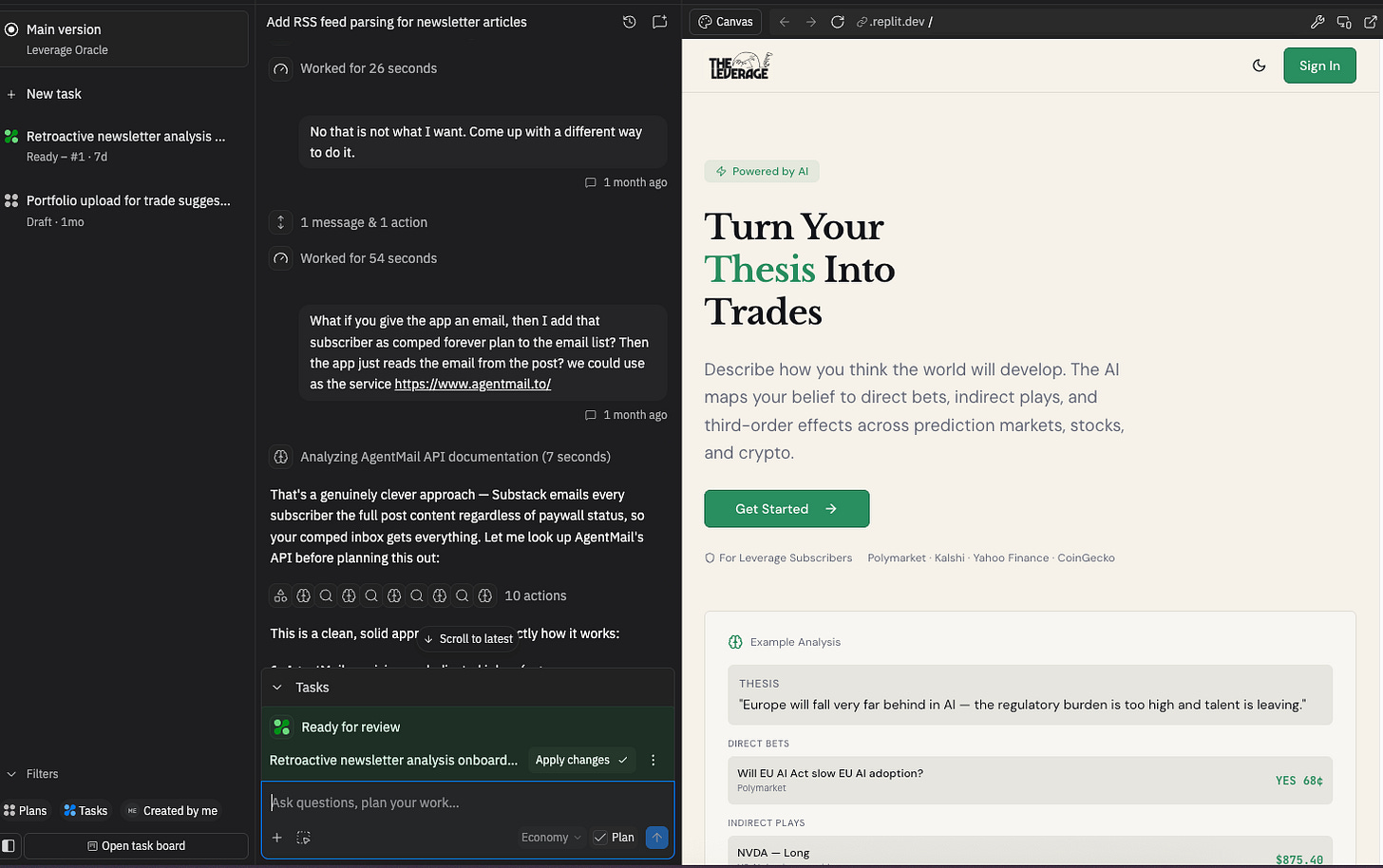

Kanban is for managing many agents generating in parallel. This will likely become the dominant paradigm of agents because it maps to how organizations already manage distributed work. It has already been embraced by Replit, Devin, and Cursor’s background agents. The user defines deliverables and tasks while multiple AI agents build in the background, all united by a kanban board to track progress. This is the paradigm that most directly threatens designers because it reframes their contribution as one task card among many, rather than the discipline that shapes the entire product.

Critically, none of these paradigms requires the thing designers spent three decades building: customized, pre-designed screens. Agents do not use Figma. They consume APIs, design tokens, and system constraints. They ingest screenshots, brand books, and design systems, and then go off and make on their own. Last year, AI agents could handle the fundamentals of design, such as layout. Increasingly, if prompted correctly and given the right playbooks, they can get a design 95% of the way there. The entire product process of the last decade, one where you built a Figma-to-engineering-handoff pipeline, assumed a human would look at the screen. When the user is an AI agent, there is no screen necessary.

If the input side of software has converged on a text box, and the output side is increasingly generated per query, the design discipline faces a question that goes beyond “will AI replace designers.” The real question is: when neither the input nor the output requires a human designer, where does design live?

The answer, it turns out, is on neither side. It lives in the rules that govern the AI.

Designers as Cops

I talked with Tanner Thelin, head of design at Leland, about this problem, and he put it in terms that are vivid, correct, and a little bleak:

“Design in an AI-native company is about enabling the rest of the team to build in a way that feels consistent with your design system and brand. When product managers can ship code, and with the rise of product-minded engineering teams, designers need to make sure the systems are set up for those folks to prompt within the context of the design system. In that way, designers are basically just cops now.”

The designer’s role becomes setting up the system so that PMs, engineers, and AI agents can build within it. The design system is prompting infrastructure. When Claude responds with a chart, something determines the chart’s colors and layout. When Lovable generates a landing page, something determines the component hierarchy and spacing. Right now, that “something” is often the AI’s default behavior, which is why so many AI-generated interfaces look generically the same. The designer’s job is to replace those defaults with intentional constraints.
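One way to picture “intentional constraints” is as a small rulebook that generated output gets checked against before it ships. The sketch below is a minimal, hypothetical illustration in Python. The token names, the values, and the `find_violations` helper are all assumptions made for this example, not any real design system or vendor API.

```python
# Hypothetical design tokens: names and values are illustrative only,
# not taken from any real design system.
DESIGN_TOKENS = {
    "color": {"#0B5FFF", "#111827", "#F9FAFB"},
    "spacing_px": {4, 8, 16, 24, 32},
    "font_family": {"Inter"},
}


def find_violations(component: dict) -> list[str]:
    """Return one message per token-governed property whose value is outside the system."""
    violations = []
    for prop, allowed in DESIGN_TOKENS.items():
        # Only check properties the component actually sets.
        if prop in component and component[prop] not in allowed:
            violations.append(f"{prop}={component[prop]!r} is not an approved token")
    return violations


# A made-up generated chart spec that uses an off-system color and off-grid spacing.
generated_chart = {
    "type": "bar_chart",
    "color": "#1F77B4",
    "spacing_px": 10,
    "font_family": "Inter",
}

for problem in find_violations(generated_chart):
    print(problem)
```

In this framing the designer owns `DESIGN_TOKENS`, and whoever (or whatever) generates the component only has to pass the check. A real setup would likely feed the same tokens into the prompt as well, so the model starts inside the constraints rather than being corrected after the fact.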

The question is what this job looks like in practice, and how many people it requires. I found that OpenAI’s open Design Systems Manager role was indicative of what the future would look like. It requires technical fluency in React, CSS, and accessibility standards, plus experience building component libraries for multimodal experiences across text, voice, image, and video. That is an infrastructure role with a design title! One person, doing that job well, replaces an entire team of mid-level designers who used to craft individual screens.

The title often says design, but the job description says engineer who cares about aesthetics. The resulting barbell, in which junior roles thin out while senior roles hold, is not unique to design. Goldman Sachs found that unemployment among 20-to-30-year-olds in tech-exposed occupations has risen by almost 3 percentage points since early 2025, and Fortune reported entry-level hiring at the top 15 tech companies fell 25% from 2023 to 2024. But design has it worse. The barbell in engineering means fewer juniors and more seniors. The barbell in design means fewer people, period.

Still, this essay is not really about designers.

Design is the first creative knowledge profession where AI is eating both sides of the workflow at the same time. It is the test case. Every function with a text box on one end and a generated artifact on the other is about to run the same experiment, and most of them will get the same result. Copywriters, then analysts, then researchers, recruiters, paralegals, media buyers, and every other role where the input is a brief and the output is a deliverable. The design profession is just three years ahead of the rest of the knowledge economy on a curve that everyone else is about to climb.

What I found when I looked at how design is actually surviving is a clean bifurcation that applies to every function AI is about to hit. Two roles endure at the other end. One of them did not exist five years ago and is already the highest-leverage job in any AI-native company. The other one is older than software itself and is about to get the biggest pay raise of any role in tech. Everything in the middle (the job most knowledge workers reading this actually have) has about 18 to 24 months before the hiring freeze turns into the layoff wave.

Here is what each of the two surviving roles looks like, how companies are already restructuring around them, and how to tell which side of the bifurcation you are currently on.

What do knowledge workers of the future do?
