The Labs Are Eating Everything

The Weekend Leverage, April 26th

While eating greasy slices of pepperoni pizza at dinner, my wife and I couldn’t agree on what skills our kid needs for the future. Whether you believe AI is a good thing or a bad thing, it is increasingly undeniable that it is at least a real thing. In a world with AI, what should we be doing differently as parents?

As we discussed, my daughter, rocking a self-selected outfit of neon pink leggings, clown-red shoes two sizes too big, and a blue tank top, was running around the restaurant. Three grannies having a gals’ night out protectively shielded the edges of their table so she wouldn’t poke out her eye. An Indian couple was smitten. Eventually my wife and I trailed off into silence and just watched the deeply kind, deeply human gestures strangers were extending toward our 18-month-old.

We couldn’t agree, because we simply don’t know. Would love to hear in the comments or in replies to this email how you’re thinking about this with your own kids.

This week’s news was the kind that reinforced how different the world will be in five years. But first, this newsletter is brought to you by Hapax.

Here’s the problem with every AI tool—they wait for you to be smart enough to use them. You prompt, you configure, you punch the air as they break and you can’t figure out why. The people who get value are the people who are already technical. Everyone else just kinda quits.

Hapax does something novel to fix this. It watches how your organization actually works, finds the repetitive garbage dragging your team down, and builds custom AI agents to handle it. They realized that the best AI setup process is one that doesn’t exist.

The team started by solving this for banks managing $90B+ in assets. The industry is regulated, non-technical, and has zero tolerance for “just write a better prompt.” That constraint forced them to build AI that finds the work and does it for you. Now they’re opening it up to everyone.

This is proactive AI. It builds AI for you. Use promo code HAPAXDEMO to get free credits for you to try it out.

MY RESEARCH

Tim Cook announced he’s stepping down as Apple CEO in September. Was he a genius or a failure? You’ll see two types of takes land in your feed this week. One says Cook is the greatest value creator of the last decade. The other says he’s the guy who stopped Apple from innovating. I have some thoughts, and the answer is weirder than either camp wants to admit. Watch here.

I spent six months watching my business die. One year ago this week I launched The Leverage, and it has been the hardest, most wonderful year of my life. I nearly went under in December with a young kid, my wife finishing a PhD, and bills coming. So I wrote up the financial results I’ve never shared publicly, the big ideas I got wrong, and what I’m betting on for year two. Read here.

WHAT MATTERED THIS WEEK?

BIG MODEL

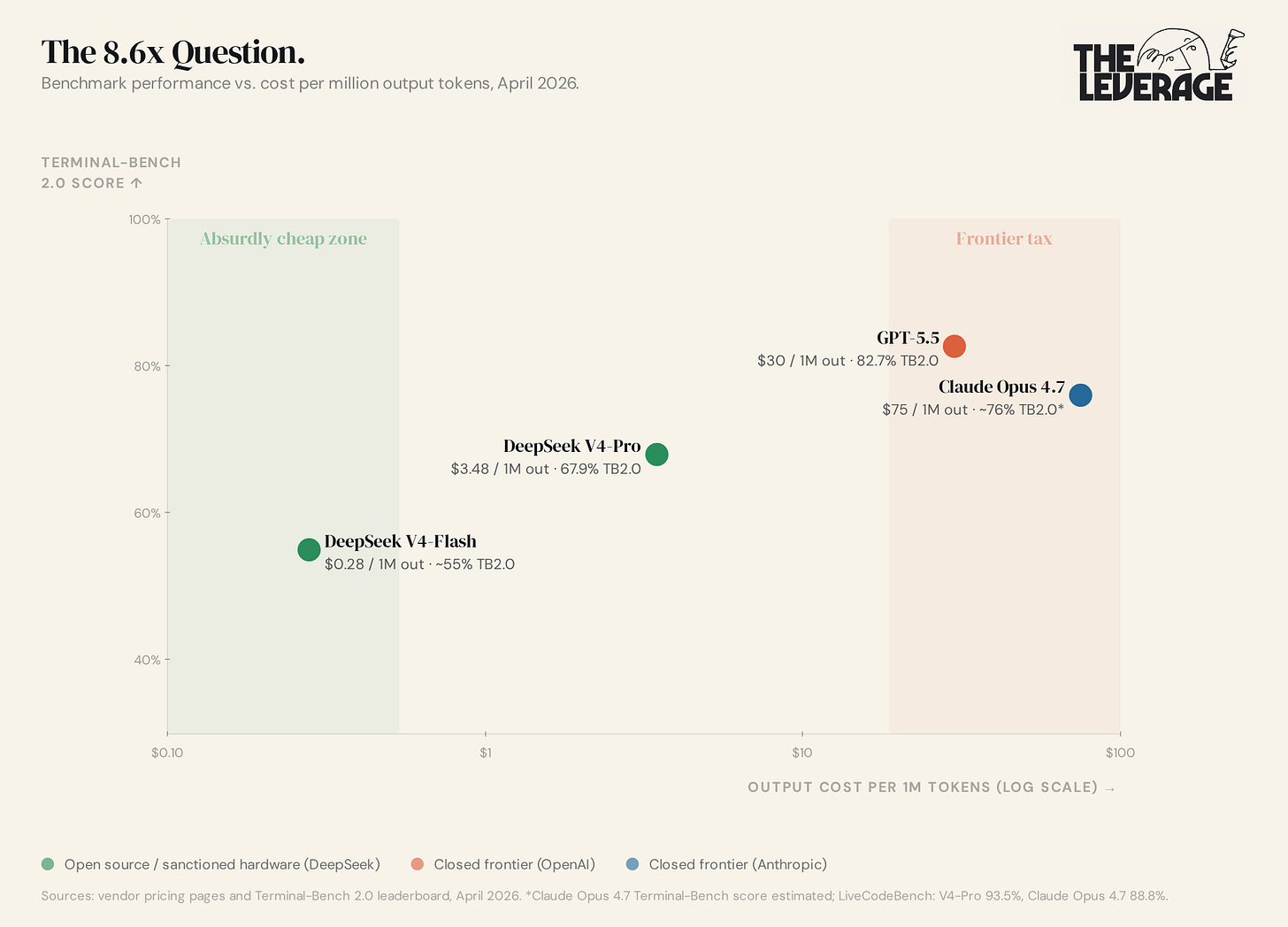

Is OpenAI back on top? Maybe? GPT-5.5 and open-weight model DeepSeek V4 dropped within 24 hours of each other this week, and at first glance Altman and Co would appear to have been cost-mogged. GPT-5.5 costs $30 per million output tokens. V4-Pro costs $3.48. Layer in DeepSeek’s 80-90% cache-hit discount and the effective gap stretches to 43-107x cheaper. Don’t be fooled by the numbers. Tokens are not, and will never be, the unit of value. What matters is what those tokens can actually do. A model priced higher per token that ships the same task in a third of the tokens can be cheaper, not more expensive, and GPT-5.5 is tuned to spend fewer reasoning tokens reaching an answer. The same logic applies to the benchmarks. A model scoring 5% higher on a test does not give you 5% better outcomes in real work. That’s like believing every 5 lbs you add to your bench press gets you laid 5x more often.
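To make the "tokens are not the unit of value" point concrete, here is a minimal sketch of cost per task rather than cost per token. The two prices come from the paragraph above. The token counts are made-up assumptions for illustration, and the sketch ignores cache discounts and input tokens entirely.

```python
# Illustrative only: cost per task = price per million output tokens * tokens used / 1e6.
# Prices are from the text; the token counts below are hypothetical.

def cost_per_task(price_per_million: float, tokens_used: int) -> float:
    """Dollar cost of one task given a per-million-token price."""
    return price_per_million * tokens_used / 1_000_000

GPT_55_PRICE = 30.00  # $ per million output tokens (from the text)
V4_PRO_PRICE = 3.48   # $ per million output tokens (from the text)

# How many times fewer tokens the pricier model must use to break even per task.
break_even_ratio = GPT_55_PRICE / V4_PRO_PRICE

# Hypothetical task: the cheaper model burns 90,000 tokens, the pricier one 10,000.
cheap_model_cost = cost_per_task(V4_PRO_PRICE, 90_000)
pricey_model_cost = cost_per_task(GPT_55_PRICE, 10_000)

print(f"break-even token ratio: {break_even_ratio:.2f}x")
print(f"V4-Pro task cost:  ${cheap_model_cost:.4f}")
print(f"GPT-5.5 task cost: ${pricey_model_cost:.4f}")
```

At these list prices the break-even sits at roughly 8.6x fewer tokens, so whether the pricier model wins depends entirely on how token-efficient it is on your actual tasks.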

In the case of AI, the things that make results possible are called the “harness.” This is everything wrapped around the model to make it more functional for the user. This is overly simplistic, but think of the LLM as the engine, and the harness as the car wrapped around the engine. In this analogy, applications like ChatGPT are the steering wheel and pedals, aka the tools by which you drive the car.

More and more of the advances in AI are harness or app dependent, versus pure model magic. The model is still very, very important (every car needs an engine). But we are at a level of AI sophistication where comparing GPT-5.5 versus V4-Pro in isolation is just plain wrong. You need to know the model, the harness, and the application. That is annoying and complicated and very hard to do, but such is life.

So in that context, how should we interpret these releases? After reading through the 5.5 release notes, and also considering the Mythos model further, the destination for these companies is obvious: complete and total digital control. All of these models are being tuned towards “be very, very good at code.” The model uses those coding abilities to become an intelligence layer that can control every other application on a machine. This is already starting to happen. ChatGPT can read screens and open programs, Cowork drives a desktop, etc. The next layer down is browsers, where the agent becomes the user navigating the web. Again, this is already happening. After that, the operating system: Apple Intelligence, Windows Copilot, in-OS agents that route every action through a model before it ever touches an app. You guessed it, already being attempted. After that, hardware. And while the big companies haven’t released anything yet, every indication is that it is coming in the next three years.

The trajectory points the same direction at every layer. So yes, GPT-5.5 is very good and yes, DeepSeek is potentially very cheap, but if that is all you are thinking about, you are just thinking 3 months ahead. Go further.

BIG ACQUISITION

Elon Musk’s The Everything Company. This probably should’ve been a standalone essay, but I got a little trigger happy here (sorry).

So deal structure first. SpaceX gets a $60 billion call option on Cursor, payable in post-IPO stock. If SpaceX walks, Cursor keeps $10 billion with no dilution.

This deal makes good strategic sense for both parties.

Cursor’s top-line numbers are spectacular. They hit $2.7 billion ARR, 14x year-over-year, fastest B2B SaaS to $1B ARR ever, beating Slack by two years. The unit economics underneath are spectacularly awful. In January 2026, gross margin was negative 23%. Every $1 of revenue cost $1.23 in API spend to Anthropic and OpenAI. At $2B ARR, that’s $2.46B annualized flowing directly to the companies building competing products. This is like paying to send the guy planning to kill you to stabbing school. Cursor was funding its killers.
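For anyone who wants to check the margin math, here is a quick sketch. It assumes, as the paragraph does, that API spend is the only cost of revenue, and it uses the $2B ARR reference point from the text.

```python
# Gross margin from the figures quoted above.
# ASSUMPTION: API spend is treated as the entire cost of revenue.

def gross_margin(revenue: float, cost_of_revenue: float) -> float:
    """Gross margin as a fraction of revenue."""
    return (revenue - cost_of_revenue) / revenue

COST_PER_REVENUE_DOLLAR = 1.23  # $ of API spend per $1 of revenue (from the text)
ARR_BILLIONS = 2.0              # $2B ARR reference point (from the text)

margin = gross_margin(1.0, COST_PER_REVENUE_DOLLAR)
annual_api_spend = ARR_BILLIONS * COST_PER_REVENUE_DOLLAR  # paid to model providers
annual_gross_loss = annual_api_spend - ARR_BILLIONS        # shortfall before any other cost

print(f"gross margin: {margin:.0%}")
print(f"annualized API spend: ${annual_api_spend:.2f}B")
print(f"annualized gross loss: ${annual_gross_loss:.2f}B")
```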

This is obviously untenable, so Cursor started to develop its own model line called “Composer.” The “proprietary model” turned out to be a Kimi 2.5 fine-tune with some RL on top, which, if you have learned anything from my previous section, could work if the application and harness were sufficiently differentiated. Margins have turned slightly positive since, but slightly could mean, like, 0.2% or something. Meanwhile Claude Code and Codex ship better agents every month at a cost per token that Cursor can simply never match.

Now look at xAI. After the February merger with SpaceX (combined valuation $1.25T, largest merger in history), xAI has compute the rest of the industry would kill for and nothing to point it at. Grok’s coding performance is two tiers below Claude and GPT. They have all the chips, and none of the reasons to use them.

Together they solve each other’s problems. Cursor gets cheap compute so its research team can actually train its own frontier model. xAI gets the highest-quality coding interaction data on earth pumped directly into its training pipeline. The coding model improves on coding product data, ships back into the coding product, and generates more data.

These people need each other. The call option also keeps Cursor founder-led and independent through the partnership. If a frontier model emerges, SpaceX exercises and acquires Cursor.

The interesting part of the deal isn’t what it does for Cursor or xAI individually. It’s what it does for the SpaceX IPO.

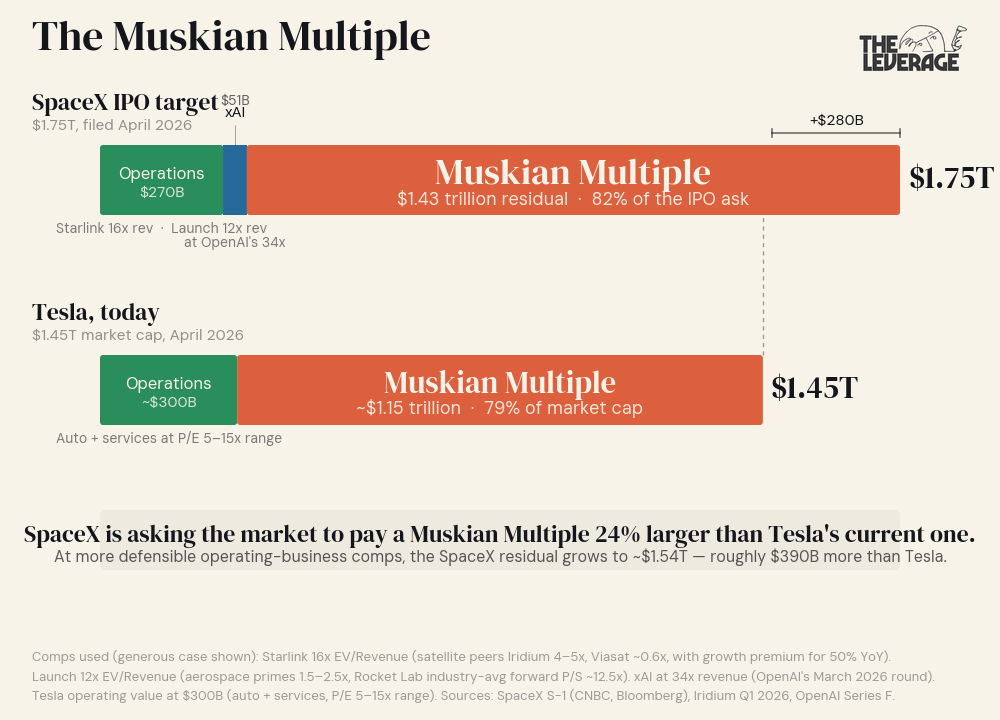

SpaceX filed its S-1 confidentially on April 1. Target: $1.75T valuation, $75B raise on the Nasdaq in June. 2025 revenue: $18.67 billion. The aggregate 93.7x multiple is meaningless on its own, because SpaceX is three different businesses welded together. Sum-of-the-parts at honest comps is the only way to read this S-1.

The key stats for Starlink are: $11.4B revenue, 63% EBITDA margins, 10M subscribers, and 50% YoY growth. The right comp set here is satellite communications and growth telecom, where Iridium trades at ~4-5x EV/revenue on $872M of trailing revenue growing 2%. Mature wireless telecom (T-Mobile 2.5x, Verizon 1.5x) sits below that. Starlink obviously deserves a growth premium over any of these, but the right premium isn’t infinite. Apply 2-3x the Iridium multiple to reflect Starlink’s growth and monopoly position and you get a defensible range of 8-12x. Generous: 15-18x. So at 16x, say, it should be worth about $182B.

The launch business is at ~$7.3B revenue, with a near-monopoly on US launch capacity. Here the comp set isn’t as useful. The aerospace primes (Boeing ~1.5x, Lockheed ~2x, Northrop ~2.5x) anchor the floor, while the high-growth pure-play comp is Rocket Lab, where the forward P/S is 53x against an industry average of 12.5x. The 12.5x industry average is the more honest ceiling. ULA was sold at 2-3x. Defensible range for SpaceX Launch: 4-8x. Generous: 10-13x. At 12x: $88B.

That gives operating-business value of $158B at defensible comps, $270B at generous comps.

Now xAI. The right comp is AI labs, not aggregate SpaceX. Anthropic is valued at $800B on $30B of revenue, or 26.7x. OpenAI’s March 2026 round closed at $852B on a $25B annualized revenue run rate, or 34x. xAI’s revenue is small: public reporting puts the run rate at roughly $1-2B between API and X Premium. Apply OpenAI’s generous 34x to $1.5B and xAI on its own performance is worth ~$51B.

Stack the operating businesses on top of xAI at fair AI-lab comp value: $209B at defensible, $321B at generous.

The IPO target is $1.75T.

The gap is $1.43-1.54T. That’s the residual the prospectus needs to fill, and there is no operating-business comp on earth that fills it. xAI would need ~$54-58B of revenue at Anthropic’s multiple to justify the residual on AI-lab comps alone. That’s roughly 30-40x its current run rate, which would require xAI to leapfrog Anthropic and OpenAI in the next two years. That feels, uh, unlikely.
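If you want to rerun the sum-of-the-parts math yourself, here is a rough sketch using the figures quoted above, all in $ billions. One assumption of mine: the "defensible" case uses the midpoints of the stated ranges (10x for Starlink, 6x for Launch), which happen to reproduce the $158B figure. Treat it as a back-of-envelope check, not a valuation model.

```python
# Back-of-envelope sum-of-the-parts, in $ billions. Inputs are the figures
# quoted in the text; the "defensible" multiples are assumed midpoints.

IPO_TARGET = 1750.0                # $1.75T target valuation
TOTAL_REVENUE_2025 = 18.67         # 2025 revenue
STARLINK_REVENUE = 11.4
LAUNCH_REVENUE = 7.3
XAI_RUN_RATE = 1.5                 # midpoint of the $1-2B range
XAI_MULTIPLE = 34.0                # OpenAI's rounded multiple ($852B / $25B)
ANTHROPIC_MULTIPLE = 800.0 / 30.0  # about 26.7x

# (Starlink multiple, Launch multiple) per scenario.
# ASSUMPTION: 10x and 6x are the midpoints of the 8-12x and 4-8x ranges.
SCENARIOS = {"defensible": (10.0, 6.0), "generous": (16.0, 12.0)}


def sum_of_parts(starlink_multiple: float, launch_multiple: float) -> dict:
    """Value each segment at its comp multiple and compare to the IPO target."""
    operating = STARLINK_REVENUE * starlink_multiple + LAUNCH_REVENUE * launch_multiple
    xai = XAI_RUN_RATE * XAI_MULTIPLE
    total = operating + xai
    gap = IPO_TARGET - total
    return {
        "operating": operating,
        "total": total,
        "gap": gap,
        # Revenue xAI would need, at Anthropic's multiple, to cover the gap alone.
        "xai_revenue_needed": gap / ANTHROPIC_MULTIPLE,
    }


aggregate_multiple = IPO_TARGET / TOTAL_REVENUE_2025
results = {name: sum_of_parts(*multiples) for name, multiples in SCENARIOS.items()}

print(f"aggregate multiple: {aggregate_multiple:.1f}x")
for name, r in results.items():
    print(
        f"{name}: operating ${r['operating']:.0f}B, total ${r['total']:.0f}B, "
        f"gap ${r['gap']:.0f}B, xAI revenue needed ${r['xai_revenue_needed']:.1f}B"
    )
```

Swap in your own multiples in `SCENARIOS` to see how far the comps would have to stretch before the gap closes.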

I did this math a couple of times, because I felt like I was missing something. There are many, many, many investors who are going to excitedly buy in at the 1.75 trillion price.

So what is happening? I call it the Muskian Multiple: the premium the market pays on the assumption that Musk personally bends reality itself to deliver outcomes that comp pricing cannot price.

This is a real thing, by the way! Not a joke! Tesla is proof of it. Tesla trades at a $1.45T market cap and a TTM P/E around 371x against traditional automakers at 5-15x. Toyota, the world’s largest automaker by volume, is worth less than a third of Tesla. Stripped to comp pricing, Tesla’s auto business is plausibly worth $200-300B. The remaining ~$1.2T is the Musk premium: a market bet that this specific operator will execute on robotaxi, Optimus, FSD, and energy storage at outcomes nobody else could deliver. The premium has held for years. It has also drawn down 50%+ multiple times. It is a real, durable, but volatile component of valuation.

The SpaceX IPO needs a Muskian premium of about $1.4-1.5T, roughly the same size as the one Tesla currently carries on top of its auto business. In other words: the prospectus assumes the public market will pay full Tesla-grade Musk premium for SpaceX, on top of fair value for rockets, broadband, and an AI lab.

Reasonable people can disagree on whether Musk earns this premium, but it certainly exists.

This is where Cursor matters. The Muskian Multiple is easier to underwrite when you can point at concrete AGI exposure. xAI on its own cannot tell that story credibly. Coding models are sub-frontier. The bench is depleted. The product surface is one chat box embedded within a smaller social media site. Buying the most successful application-layer AI product on earth — at the exact moment coding-agent revenue is the most legible proof AI is automating real labor — gives the prospectus something tangible to point at. Without Cursor, the IPO is selling $1.4T of pure Musk premium. With Cursor, it’s selling a Musk premium attached to an actual AGI thesis bankers can syndicate.

Hilariously, part of the reason this deal is structured as a call option is that if they had fully executed the transaction, they would’ve needed to refile the IPO. So yes, the acquisition that helps justify the IPO can’t actually be marketed as part of the initial security. What a world.

The Cursor deal is not an acqui-hire with optionality. It is a load-bearing beam in the IPO pitch.

Taking a step further back, Cursor’s $60 billion outcome, despite being gross margin negative for essentially its entire existence, is an enormous, expensive vote on the thesis we’ve been discussing this whole newsletter. Coding is the wedge by which to control the universe.

Combine with last week’s piece on Anthropic and Figma and the pattern is loud. Anthropic vertical into design. xAI vertical into coding via Cursor. The model providers have decided selling tokens to application companies leaves most of the value on the table, and they will eat entire SaaS categories whole to capture it. The blast zone isn’t one or two companies. It’s every workflow a model can plausibly do end-to-end. That’s most of them. If you run a vertical SaaS business sitting between a model and a knowledge worker, you’re now in the path of a vertical-integration play, not a partnership conversation.

Which brings me to the part you should sit with personally. If model providers will integrate vertically into coding (paying $60B to do it) and into design, they will integrate vertically into your function too. Computer-use agents are not a feature. They are a phase change. When a model opens the apps you open, clicks what you click, and produces the artifacts you produce, the question stops being “what tools will my employer buy” and starts being “what does my employer need a person for.” This is one of those trite sentences tech people say. I mean each word to the fullness of its definition. Automated computer use is a fundamentally different world. Plan accordingly.

TASTEMAKER

Noah Kahan’s new album has got the juice. As a white guy who enjoys fly fishing, lives in New England, and exists in a low-grade state of yearning, I am squarely within Kahan’s Ideal Customer Profile. And damn it all, I can’t help fulfilling this stereotype. I love this guy. You probably heard Stick Season a few years ago, and his new album is a strong follow-up. It doesn’t quite hit the same soaring heights as his previous album, but I still found myself sniffling to the lyrics as I drove around Boston this weekend. (If anyone has tickets to his show at Fenway this summer to give me, that would be much appreciated.) Check out the opening track, The End of August.

Go and be kind this week,

Evan

Sponsorships

We are now accepting sponsors for Q2 ‘26. If you are interested in reaching my audience of 34K+ founders, investors, and senior tech executives, send me an email at team@gettheleverage.com.